Monitoring a Dockerized ELK Stack with Prometheus and Grafana

February 16, 2017

The ELK Stack is today the world’s most popular log analysis platform. It’s true.

Trump and humor aside — and as we’ve made the case in previous posts — running an ELK Stack in production is not a simple task, to say the least, and it involves numerous challenges that consume both time and resources. One of these challenges involves monitoring the stack — understanding when your Elasticsearch cluster is stretched to the limit and when your Logstash instances are about to crash.

That’s why if you’re running ELK on Docker, as many DevOps teams are beginning to do, it’s imperative to keep tabs on your containers. There are plenty of monitoring tools out there, some specialized for Dockerized environments, but most can get a bit pricey and complicated to use as you go beyond the basic setup.

This article explores an easy, open source alternative for monitoring a Dockerized ELK Stack: using Prometheus as the time-series data collection layer and Grafana as the visualization layer.

Prometheus has an interesting story. Like many open source projects, it was initially developed in-house by the folks at SoundCloud, who were looking for a system that had an easy querying language, was based on a multi-dimensional data model, and was easy to operate and scale. Prometheus now has an engaged and vibrant community and a growing ecosystem for integrations with other platforms. I recommend reading more about Prometheus here.

Let’s take a closer look at the results of combining Prometheus with Grafana to monitor ELK containers.

Installing ELK

If you haven’t got an ELK Stack up and running, here are a few Docker commands to help you get set up.

The Dockerized ELK I usually use is: https://github.com/deviantony/docker-elk.

With rich running options and great documentation, it’s probably one of the most popular ELK images used (other than the official images published by Elastic).

Setting it up involves the following commands:

git clone https://github.com/deviantony/docker-elk.git
cd docker-elk
sudo docker-compose up -d

You should have three ELK containers up and running with port mapping configured:

CONTAINER ID   IMAGE                     COMMAND                  CREATED          STATUS          PORTS                                            NAMES
9f479f729ed8   dockerelk_kibana          "/docker-entrypoin..."   19 minutes ago   Up 19 minutes   0.0.0.0:5601->5601/tcp                           dockerelk_kibana_1
33628813e68e   dockerelk_logstash        "/docker-entrypoin..."   19 minutes ago   Up 19 minutes   0.0.0.0:5000->5000/tcp                           dockerelk_logstash_1
4297ef2539f0   dockerelk_elasticsearch   "/docker-entrypoin..."   19 minutes ago   Up 19 minutes   0.0.0.0:9200->9200/tcp, 0.0.0.0:9300->9300/tcp   dockerelk_elasticsearch_1

Don’t forget to set the ‘max_map_count’ value, otherwise Elasticsearch will not run:

sudo sysctl -w vm.max_map_count=262144
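If you would rather check the current value before changing it, here is a minimal sketch. The function names are my own and purely illustrative; the only real commands involved are `sysctl -n` (read a kernel setting) and `sysctl -w` (write one).

```shell
#!/usr/bin/env bash
# Minimum value Elasticsearch expects for vm.max_map_count.
REQUIRED_MAX_MAP_COUNT=262144

# Succeeds (exit status 0) when the given value is at or above the minimum.
# $1 = value to check (hypothetical helper, not part of any tool).
max_map_count_ok() {
  [ "$1" -ge "$REQUIRED_MAX_MAP_COUNT" ]
}

# Read the live kernel setting and raise it only when it is too low.
ensure_max_map_count() {
  local current
  current="$(sysctl -n vm.max_map_count)"
  if max_map_count_ok "$current"; then
    echo "vm.max_map_count=$current is already sufficient"
  else
    sudo sysctl -w "vm.max_map_count=$REQUIRED_MAX_MAP_COUNT"
  fi
}
```

Calling `ensure_max_map_count` applies the change for the running system only. A value set with `sysctl -w` is lost on reboot, so to make it permanent you would typically also add `vm.max_map_count=262144` to /etc/sysctl.conf.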

Installing Prometheus and Grafana

Next up, we’re going to set up our monitoring stack.

There are a number of pre-made docker-compose configurations to use, but in this case I’m using the one developed by Stefan Prodan: https://github.com/stefanprodan/dockprom

It will set up Prometheus, Grafana, cAdvisor, Node Exporter and alerting with AlertManager.

To deploy, use these commands:

git clone https://github.com/stefanprodan/dockprom
cd dockprom
docker-compose up -d

After changing the Grafana password in the user.config file, open up Grafana at: http://<serverIP>:3000, and use ‘admin’ and your new password to access Grafana.

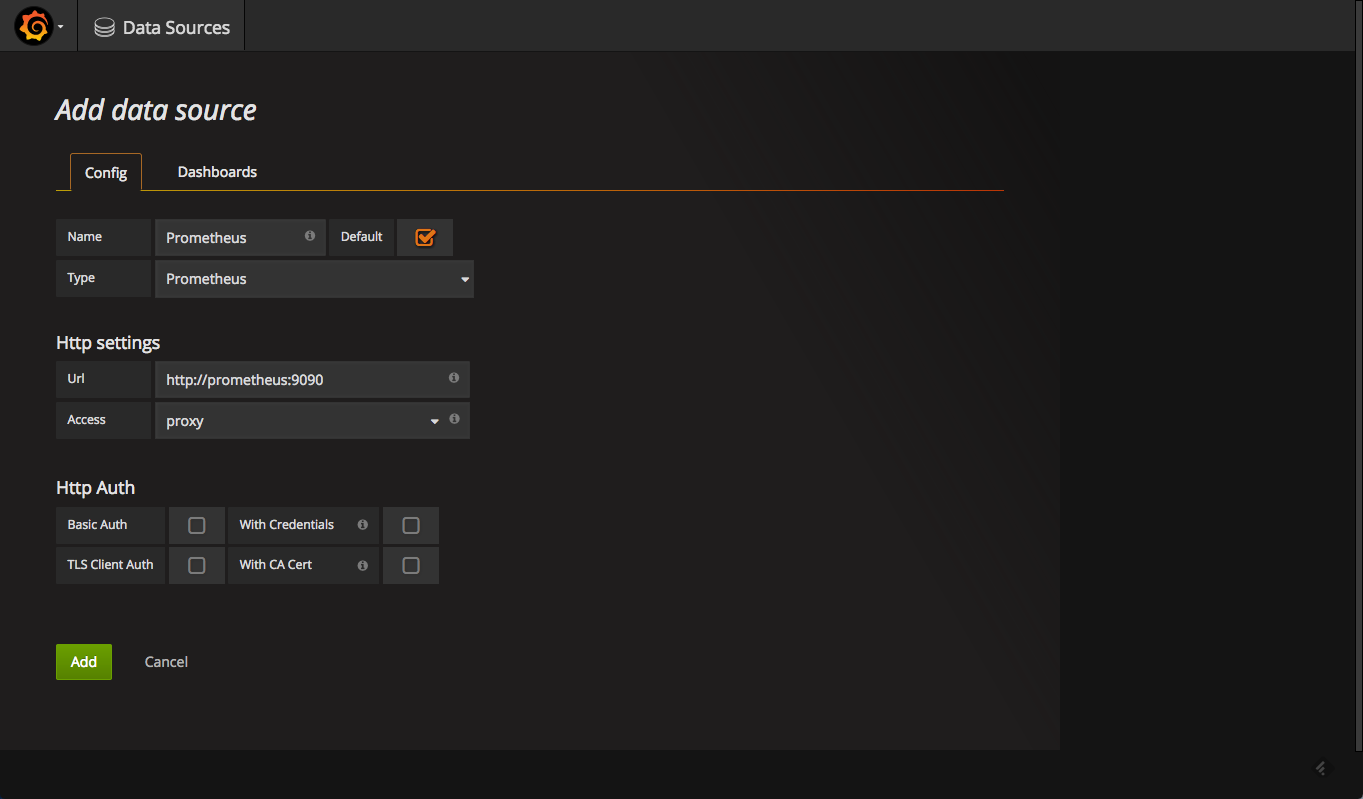

Defining the Prometheus Datasource

Your next step is to define Prometheus as the data source for your metrics. This is easily done by clicking Create your first data source.

The configuration for adding Prometheus in Grafana is as follows:

Once added, test and save the new data source.
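If you prefer the command line to the UI, the same data source can be registered through Grafana's HTTP API (`POST /api/datasources`). The sketch below rests on a few assumptions of mine rather than on settings taken from this setup: the data source is named "Prometheus", Grafana reaches Prometheus at http://prometheus:9090 over the shared Compose network, and the access mode is "proxy". The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Print the JSON body describing a Prometheus data source.
# $1 = data source name, $2 = Prometheus URL as seen from the Grafana container.
datasource_payload() {
  printf '{"name":"%s","type":"prometheus","url":"%s","access":"proxy","isDefault":true}\n' "$1" "$2"
}

# POST the payload to Grafana using basic auth.
# $1 = Grafana base URL, $2 = admin password (both supplied by you).
# The name and URL below are assumptions; adjust them to your deployment.
add_prometheus_datasource() {
  datasource_payload "Prometheus" "http://prometheus:9090" |
    curl -s -X POST "$1/api/datasources" \
      -u "admin:$2" \
      -H 'Content-Type: application/json' \
      --data-binary @-
}
```

You would call it as `add_prometheus_datasource "http://<serverIP>:3000" "<your password>"`. Keeping the payload in its own function makes it easy to inspect the JSON before sending anything.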

Adding a Monitoring Dashboard

Now that we have Prometheus and Grafana set up, it’s just a matter of slicing and dicing the metrics to create the beautiful panels and dashboards Grafana is known for.

To hit the ground running, the same GitHub repo used for setting up the monitoring stack also contains some dashboards we can use out-of-the-box.

In Grafana, all we have to do is go to Dashboards -> Import, and then paste the JSON in the required field. Please note that if you changed the name of the data source, you will need to change it within the JSON as well. Otherwise, the dashboard will not load.

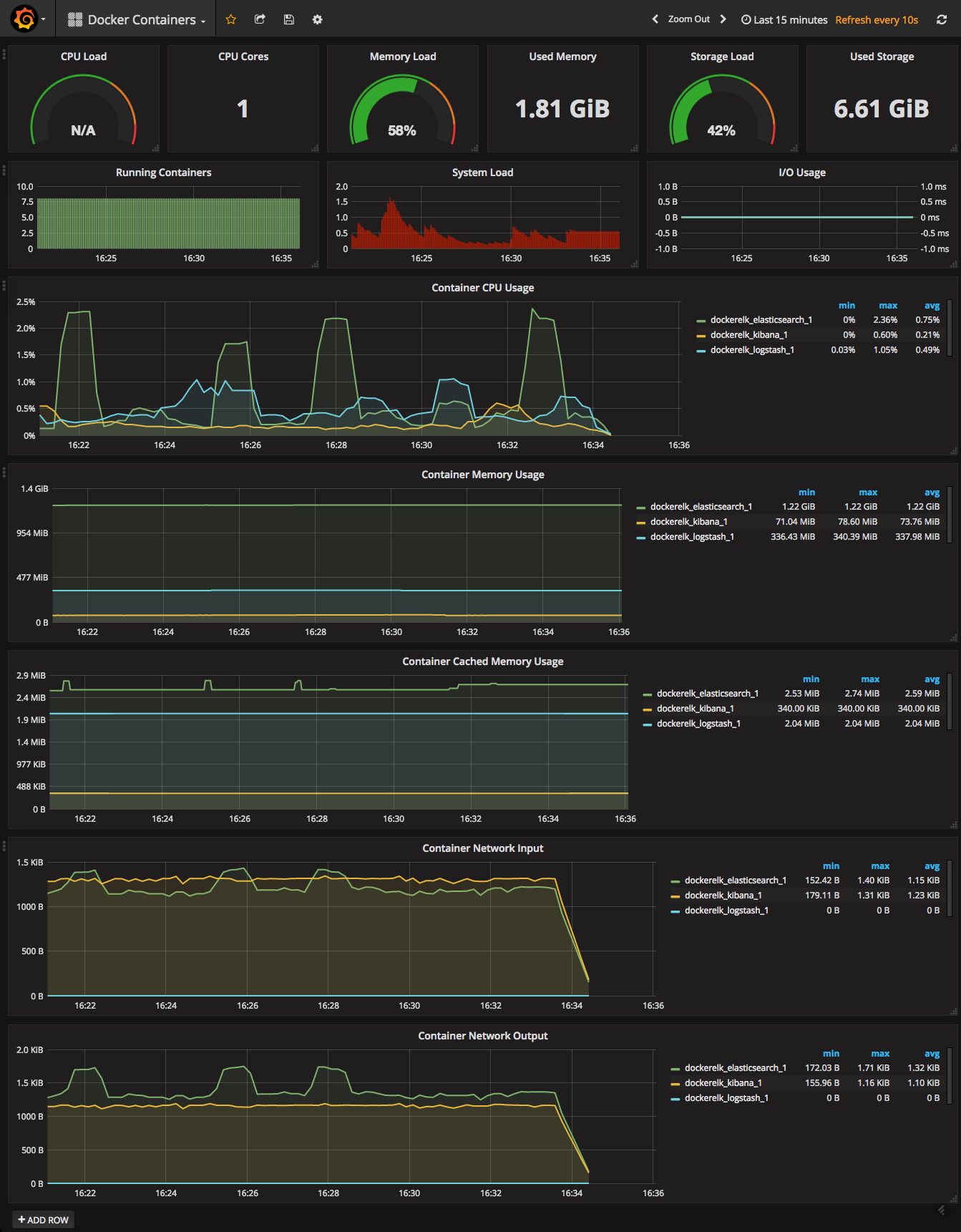
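If your data source has a different name, you can rewrite the dashboard JSON before pasting it instead of editing it by hand. This is a rough sketch that assumes the dashboard refers to the data source through plain `"datasource": "<name>"` fields and that neither name contains characters special to sed (such as `/` or `&`). The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Rewrite every "datasource" reference in a dashboard JSON read from stdin.
# $1 = name used in the downloaded dashboard, $2 = name of your data source.
rename_datasource() {
  sed "s/\"datasource\":[[:space:]]*\"$1\"/\"datasource\": \"$2\"/g"
}
```

For example, `rename_datasource Prometheus Metrics < dashboard.json > dashboard_renamed.json` would produce a copy that points at a data source called "Metrics" (both file names are placeholders).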

The Docker Containers dashboard looks like this:

It really is that simple — in a matter of minutes, you will have a Docker monitoring dashboard in which you will be able to see container metrics on CPU, memory, and network usage.

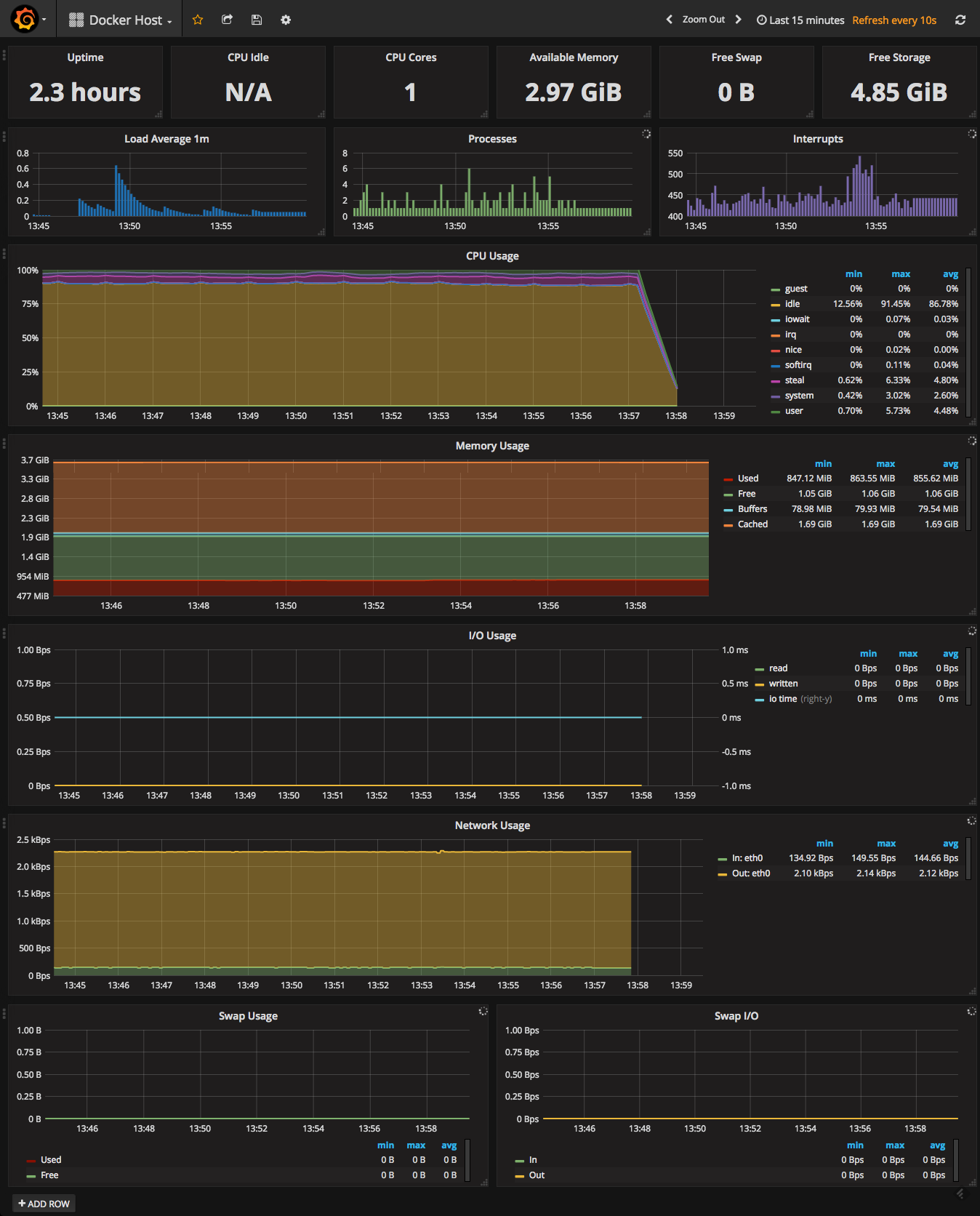

Another useful dashboard for your Docker environment is the Docker Host dashboard, which is also available in the same repo and imported the same way. This dashboard will give you an overview of your server with data on CPU and memory, system load, IO usage, network usage, and more.

Pictures are worth a thousand words, are they not?

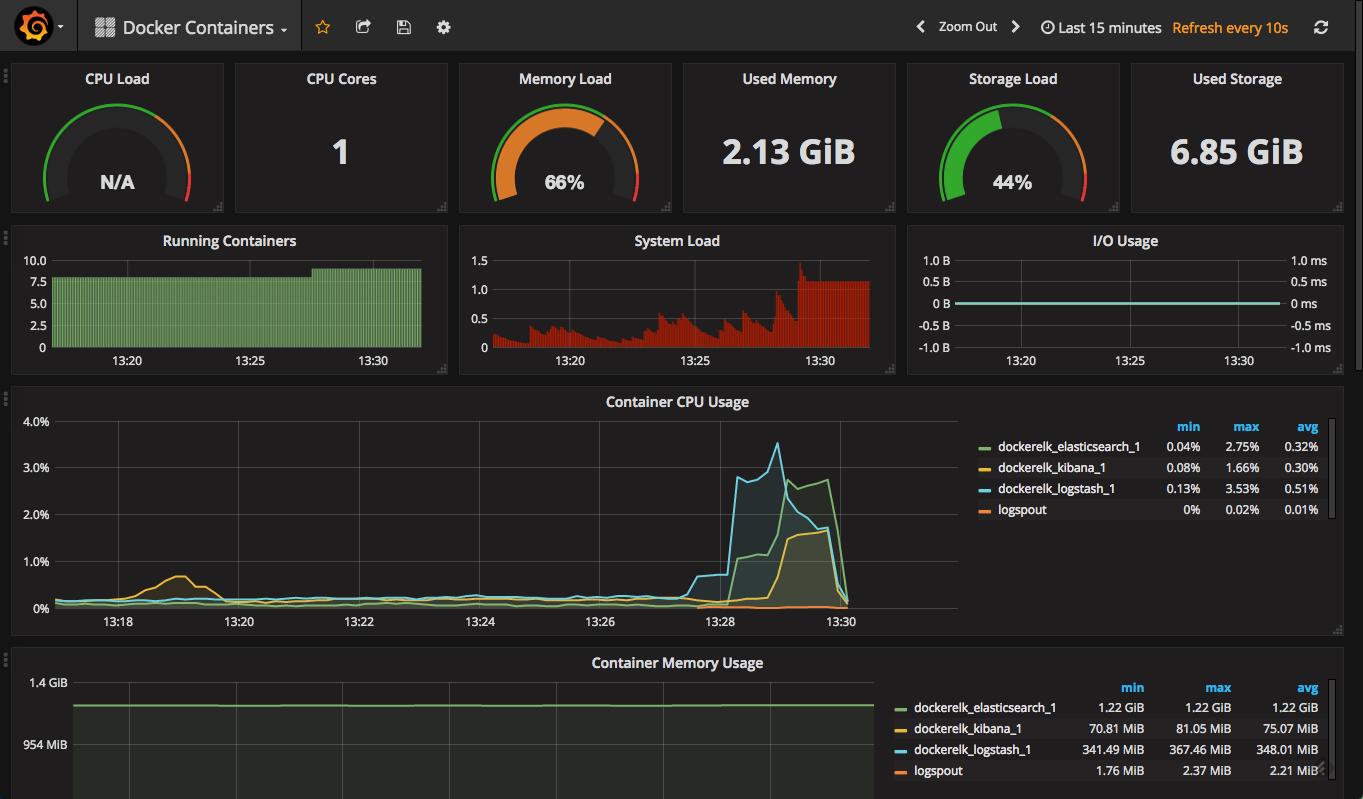

The flat lines in the screenshots reflect the fact that there is no logging pipeline in action. As soon as we establish a basic pipeline, we can see our Elasticsearch and Logstash containers beginning to pick up.

As you can see in the case below, I’m using logspout to forward syslog logs:

This, of course, is just a basic deployment — an Elasticsearch container handling multiple indices and documents will present with more graphs in the “red” — meaning dangerous — zone.
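To look at the numbers behind the panels, you can also query Prometheus directly through its HTTP API (`/api/v1/query`). The sketch below assumes Prometheus is reachable on http://localhost:9090 without authentication and that cAdvisor labels each container with its `name`, as in the container names listed earlier. The function names and the `PROMETHEUS_URL` variable are my own.

```shell
#!/usr/bin/env bash
# Assumed Prometheus address; override it if yours differs.
PROMETHEUS_URL="${PROMETHEUS_URL:-http://localhost:9090}"

# PromQL for one container's CPU usage (in cores), averaged over the last minute.
# $1 = container name as shown by `docker ps`.
cpu_query() {
  printf 'sum(rate(container_cpu_usage_seconds_total{name="%s"}[1m]))' "$1"
}

# PromQL for one container's current memory usage in bytes.
memory_query() {
  printf 'container_memory_usage_bytes{name="%s"}' "$1"
}

# Run an instant query against Prometheus and print the raw JSON response.
# $1 = PromQL expression.
prom_query() {
  curl -s -G "$PROMETHEUS_URL/api/v1/query" --data-urlencode "query=$1"
}
```

For instance, `prom_query "$(memory_query dockerelk_elasticsearch_1)"` returns the latest memory sample for the Elasticsearch container, which is handy for deciding on alert thresholds before wiring them into AlertManager.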

Summary

There are various ways of monitoring the performance of your ELK Stack, regardless of where you’re running it (for example, on Docker).

In a previous post, Roi Ravhon described how we use Grafana and Graphite to monitor our Elasticsearch clusters. Since the release of ELK Stack 5.x, monitoring capabilities have been built into the stack and even Logstash, the performance bane of the stack, now has a monitoring solution included in X-Pack.

But if you’re using Docker, the combination of Prometheus and Grafana offers an extremely enticing option to explore for reasons of ease of use and functionality.

It’s definitely worth exploring, and if worse comes to worst — sudo docker rm.