Communication, collaboration and integration are the three main principles of the ever-growing, modern approach to software delivery known as “DevOps.” Coined in 2009 by Patrick Debois, the term (a blend of “development” and “operations”) describes an extension of agile development that aims to improve the process of software delivery as a whole. Of course, the correct definition of DevOps is still widely disputed.

DevOps is the Next Generation of Agile

Perspectives differ on where Agile methodology fits into DevOps (or, how DevOps fits into Agile). Some see it as DevOps vs. Agile while others see them as two sides of the same methodology coin. Still others would say Agile enables DevOps to exist.

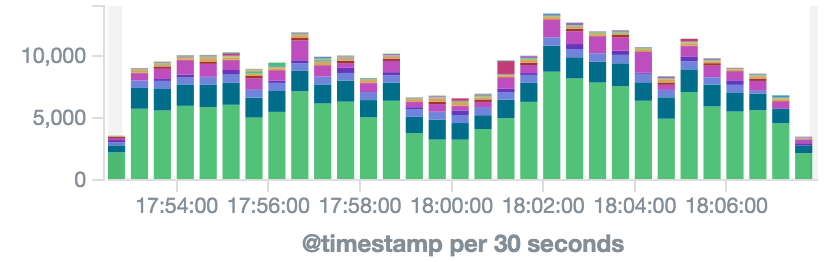

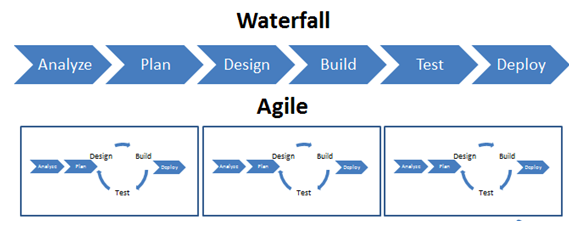

Back in 2009, more IT professionals started to move away from the traditional waterfall method and embrace nonlinear agile methodology by making each development stage independent and incorporating continuous testing early on and throughout the development cycle (see the glossary in the second half of this guide for more detail on these terms).

Consequently, this approach enhanced efficiency and reduced risk by allowing developers to make immediate changes, based on the continuous feedback they received, before shipping to production. Although agile methods improved development, deployment still followed the linear waterfall structure, which slowed delivery, left testing to the end of the process, and wrongly split ownership. This created huge bottlenecks in delivery cycles because developers would need to start from the beginning if a problem was discovered near the end of deployment.

It was through seeing this disconnect between development and deployment as well as understanding the benefits of embracing agile in all aspects of software delivery that Debois came up with the notion of DevOps. The marriage of development and operations along with the extended best practices and principles associated with agile had the potential to greatly increase efficiency and lower delivery risks.

DevOps Requires a Cultural Change

DevOps is neither a tool nor a technique. It’s really more of a cultural change. Change is feared throughout most organizations of any type, so the adoption of new methodologies can be quite challenging. Therefore, it is vital to first define the business need that prompted the discussion of the potential change, as well as the accompanying challenges. Nowadays, businesses are expected to quickly deliver flawless applications that focus on user experience, but without the right tools, applications, and behavior, this seemingly simple task can turn into a complicated mess. Ultimately, faulty delivery translates into missed business opportunities.

DevOps culture can live only in environments where everyone is on board with the philosophy. It takes the right technology, situational assessment, and attitude to pull off successful software development and delivery. If everyone in an IT organization is on the same page and understands the power of clear and consistent communication as well as the underlying business goals, then the sky’s the limit. Of course, having a wide skill set is beneficial to every aspect of the process, as long as those fortunate individuals are willing to be team players.

DevOps Needs Unified, Multi-Skilled Teams

The evolution of developer methodology in the last decade has seen the decline and emergence of different roles: from sysadmin to SRE, then from SRE to DevOps engineer. This career path is consequently common on the resumes of many developers and engineers, as the methodologies and expectations demanded of new hires evolve.

As mentioned above, collaboration, communication, and integration are the key elements of incorporating DevOps into any development and delivery setting. Building multi-skilled teams that are made up of individual talents (e.g., developers, sysadmins, and testers) can add great benefit, but without the right teamwork and attitude, the talent is virtually useless. When people know they can rely on everyone else, the group as a whole also moves much more quickly and efficiently, ultimately leading to happier customers.

The first step in a DevOps approach involves recognizing how software development, IT operations, and QA are mutually dependent. DevOps relies on cross-departmental collaboration and open communication between the key players in the software delivery pipeline in order to boost operational efficiency, predictability, and maintainability. Integrating and automating these elements early in the process enables teams to streamline software delivery.

DevOps is the Future of Enterprise IT

Modern-day enterprise applications are riddled with complexities that keep growing from the use of different technologies, multiple databases, and various end-user devices, and DevOps might be the only viable way to cope with such diverse environments successfully.

A DevOps Glossary

The following are the terms and tools within the overall principles described above that successful DevOps engineers need to know:

The Cloud

IaaS: If you work in IT, you’ve heard of the public cloud. If you’ve heard of the public cloud, then you’ve heard of the leading cloud vendors such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). These are infrastructure as a service (IaaS) vendors that provide computing resources to customers via a public connection over the Internet in a virtualized environment. These resources include storage, bandwidth, virtual servers, load balancers, network connections, and IP addresses.

- Examples of vendors and tools: AWS, GCP, Azure, IBM SoftLayer, DigitalOcean

PaaS: Platform as a service (PaaS) enables developers to build applications and services on a cloud-based platform. PaaS offerings may require little to no client-side hosting expertise and include preconfigured features in simple frameworks. PaaS providers update their services on a regular basis with upgrades and new features and offer support to developers from the start through deployment. PaaS services are generally offered on a pay-per-use subscription basis.

- Examples of vendors and tools: Heroku, Google App Engine, AWS Elastic Beanstalk

SaaS: If you’re familiar with Google and Facebook, you’ve already been exposed to software as a service (SaaS). These cloud-hosted software applications serve both individuals and organizations for uses such as instant messaging, email, performance monitoring, accounting, and communications, and the tools can easily be accessed online. As opposed to traditional software applications that are purchased with licensing limitations, SaaS applications are subscription-based, used online, and hosted in the cloud.

Agile vs. Waterfall

Waterfall is a methodology that separates the various phases of software development and delivery (e.g., analysis, design, development, testing) and executes each phase in a linear manner. As a result, code may not be developed until a project is well on its way, and the important phases of testing and quality assurance may be shortened or omitted altogether if there are delays in previous phases. If problems are brought to light in testing or QA, the software has to be recoded or go even further back in the development process.

Agile is a methodology that approaches business and software development projects in a nonlinear and, consequently, more efficient way. Testing is implemented early and often so that developers can fix problems and make adjustments while they build, providing better control over their projects and reducing many of the risks associated with the waterfall methodology.

Integration and Delivery

Continuous Integration (CI) – Developers integrate code into a shared repository multiple times a day, and each isolated change to the code is tested immediately in order to detect and prevent integration problems.

Continuous Delivery (CD) – As an extension of CI and the next step in incremental software delivery, continuous delivery (CD) ensures that every version of the code that is tested in the CI repository can be released at any moment.

- Examples of vendors and tools: Jenkins, TeamCity, TravisCI, Electric Cloud, Go, Codeship, AWS CodeDeploy

Continuous Deployment – Continuous deployment can be thought of as a further extension of continuous integration and delivery that aims to get new code deployed to production, where it is used by live users. Supported by CI, a change is released immediately once its tests meet the release criteria.
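The difference between the three practices defined above is easiest to see as a sequence of gates. The following is a minimal sketch, not the workflow of any particular CI tool: `Build`, `run_pipeline`, and the `auto_deploy` flag are hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Build:
    """One integrated change and what the pipeline decided about it."""
    commit: str
    tests_passed: bool = False
    releasable: bool = False
    deployed: bool = False
    log: List[str] = field(default_factory=list)


def run_pipeline(commit: str,
                 tests: List[Callable[[], bool]],
                 auto_deploy: bool) -> Build:
    """Hypothetical pipeline: test the change, mark it releasable, maybe deploy."""
    build = Build(commit=commit)

    # Continuous integration: every change is tested as soon as it is merged.
    build.tests_passed = all(test() for test in tests)
    build.log.append("tests passed" if build.tests_passed else "tests failed")
    if not build.tests_passed:
        return build

    # Continuous delivery: a build that passes is always ready to release.
    build.releasable = True
    build.log.append("release candidate ready")

    # Continuous deployment: the release happens automatically, with no manual gate.
    if auto_deploy:
        build.deployed = True
        build.log.append("deployed to production")
    return build


if __name__ == "__main__":
    result = run_pipeline("abc123", [lambda: 1 + 1 == 2], auto_deploy=True)
    print(result.log)
```

With `auto_deploy=False`, the sketch stops at continuous delivery: the build is releasable, but someone still has to decide when to ship it.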

Configuration Management (CM)

Resource Orchestration

When it comes to microservices, service-oriented architecture, converged infrastructures, virtualization, and provisioning, the coordination and integration of computer systems is known as orchestration. By leveraging defined automated workflows, orchestration makes sure that your IT infrastructure resources are aligned with business needs.
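One way to picture a “defined automated workflow” is as a set of steps with dependencies that must run in a valid order. The sketch below uses Python’s standard-library `graphlib` module (Python 3.9+) to compute that order. The step names in `WORKFLOW` and the `plan` and `run` helpers are made up for illustration and do not correspond to any specific orchestration tool.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow: each step maps to the set of steps it depends on.
WORKFLOW = {
    "provision_network": set(),
    "provision_database": {"provision_network"},
    "provision_servers": {"provision_network"},
    "deploy_application": {"provision_database", "provision_servers"},
    "register_with_load_balancer": {"deploy_application"},
}


def plan(workflow):
    """Return the steps in an order that respects every dependency."""
    return list(TopologicalSorter(workflow).static_order())


def run(workflow, actions):
    """Execute each step's action in dependency order and return the order used."""
    order = plan(workflow)
    for step in order:
        actions[step]()
    return order


if __name__ == "__main__":
    # Each action just prints its name; a real action would call a provider's API.
    run(WORKFLOW, {step: (lambda s=step: print("running", s)) for step in WORKFLOW})
```

Real orchestration tools add retries, rollback, and parallel execution on top of this basic idea of dependency ordering.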

Containers

Linux containers are lightweight virtualization components that run isolated application workloads. They run their own processes, file systems, and network stacks, all of which are virtualized by the host operating system (OS) running on the hardware.

- Examples of vendors and related tools: Docker, CoreOS, Kubernetes, Mesos

Source (Version) Control

Version control includes practices and tools that help R&D organizations maintain and control changes within their source code repository. R&D members use source control tools to document and track system configuration files as well.

- Examples of vendors and tools: GitHub, Bitbucket, JFrog Artifactory

Bug Tracking

A bug tracker is a system that aggregates and reports software bugs and defects. It helps R&D organizations with task management and is part of the consistent feedback loop that the DevOps methodology requires.

Test Automation

Test automation supports the work of test engineers by running multiple tests continuously. It enhances test coverage while supporting efficient release cycles. For example, test automation tools help manage, execute, and measure functional tests and load tests.

Unit Test – Unit testing is a process that allows testers to examine small parts of an application, such as a specific function or module. These tests are usually automated and reused in order to support continuous testing and integration.
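As a rough illustration, here is what an automated unit test might look like using Python’s built-in `unittest` module. The `apply_discount` function is a hypothetical unit under test, invented for this example.

```python
import unittest


def apply_discount(price, percent):
    """Return the price after a percentage discount (hypothetical unit under test)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_rejects_out_of_range_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)


if __name__ == "__main__":
    unittest.main()
```

Because each test checks one small behavior in isolation, a CI server can rerun the whole suite on every change and point directly at the unit that broke.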

Monitoring

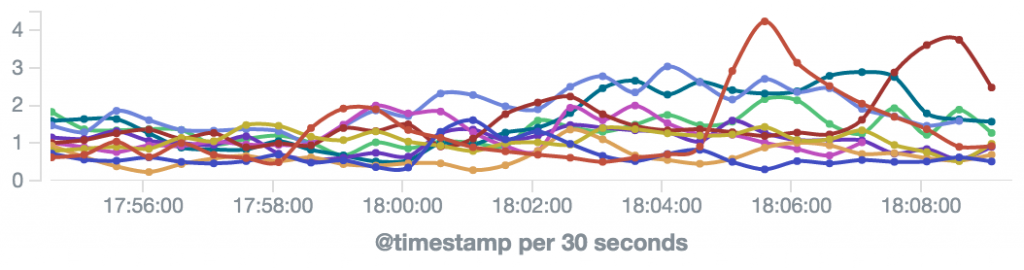

Monitoring is a primary element of IT performance management and is one of the most important aspects when operating online services. Monitoring tools are essential and provide crucial information that helps to ensure service robustness in terms of availability, security, and performance.

Application Performance Monitoring (APM) – APM allows you to automatically detect and be alerted about hotspots across your application stack, including the application and database layers.

- Examples of vendors and tools: New Relic, AppDynamics, DataDog
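Commercial APM products instrument code automatically, but the underlying idea of finding hotspots can be sketched in a few lines. The example below is an illustrative toy, not how any of the vendors above work: the `timed` decorator, the `hotspots` helper, and the two sample functions are all hypothetical.

```python
import time
from collections import defaultdict
from functools import wraps

# Durations in seconds, recorded per function name.
timings = defaultdict(list)


def timed(func):
    """Record how long each call to func takes."""
    @wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            timings[func.__name__].append(time.perf_counter() - start)
    return wrapper


def hotspots(threshold_seconds):
    """Return names of functions whose average duration exceeds the threshold."""
    return sorted(
        name for name, samples in timings.items()
        if sum(samples) / len(samples) > threshold_seconds
    )


@timed
def query_database():  # hypothetical slow database-layer call
    time.sleep(0.05)
    return "rows"


@timed
def render_page():  # hypothetical fast application-layer call
    return "<html></html>"


if __name__ == "__main__":
    query_database()
    render_page()
    print(hotspots(0.02))
```

An alerting layer would then notify someone whenever `hotspots` returns a non-empty list for a chosen threshold.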

Infrastructure Monitoring – Tools in this category automatically detect and alert about degradations in underlying physical or virtual resource performance and availability.

- Examples of vendors and tools: AWS CloudWatch, Nagios, Zabbix, Icinga
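At its simplest, detecting a degradation means comparing a resource metric against a threshold. The sketch below assumes a made-up `Sample` record and example threshold values; it does not use the API of any of the tools listed above, and sensible thresholds depend on the workload.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    host: str
    metric: str   # for example "cpu_percent" or "disk_percent"
    value: float


# Hypothetical alert thresholds; real values depend on the workload.
THRESHOLDS = {"cpu_percent": 90.0, "disk_percent": 85.0}


def check(samples, thresholds=THRESHOLDS):
    """Return one alert message per sample that exceeds its threshold."""
    alerts = []
    for s in samples:
        limit = thresholds.get(s.metric)
        # Metrics without a configured threshold are ignored.
        if limit is not None and s.value > limit:
            alerts.append(f"{s.host}: {s.metric} at {s.value:.1f} exceeds {limit:.1f}")
    return alerts


if __name__ == "__main__":
    for alert in check([Sample("web-1", "cpu_percent", 97.5)]):
        print(alert)
```

Production monitoring tools typically also require the condition to persist for some time before alerting, so that short spikes do not page anyone.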

Log Management

Log management (or log analytics) is the practice of dealing with large volumes of computer-generated messages. These messages can serve operational purposes (e.g., tracking service performance or security) or business intelligence (BI) purposes (e.g., tracking online user behavior).

- Vendors and tools: Logz.io (ELK), Splunk, Sumo Logic
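To make this concrete, the following sketch parses raw log lines into structured fields and then aggregates them, which is the core of what log analytics does at much larger scale. The line format, the `parse` and `count_by` helpers, and the field names are assumptions chosen for illustration.

```python
import re
from collections import Counter

# Assumed line format: "<timestamp> <LEVEL> <service> <message>"
LINE = re.compile(r"^(?P<ts>\S+) (?P<level>[A-Z]+) (?P<service>\S+) (?P<message>.*)$")


def parse(line):
    """Turn one raw log line into a dict, or None if it does not match."""
    match = LINE.match(line.strip())
    return match.groupdict() if match else None


def count_by(lines, key):
    """Count parsed log lines by one field, for example "level" or "service"."""
    events = (parse(line) for line in lines)
    return Counter(event[key] for event in events if event)


if __name__ == "__main__":
    sample = [
        "2024-01-01T10:00:00Z INFO checkout order placed",
        "2024-01-01T10:00:01Z ERROR checkout payment gateway timeout",
    ]
    print(count_by(sample, "level"))
```

Counting by `level` answers an operational question (how many errors?), while counting by a field such as `service` or a user action answers a BI-style one.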

For Further Reading on DevOps

DevOps is an extensive topic, and no single informational guide can cover every aspect of this culture change in the process of software delivery and deployment. So, we at Logz.io will be publishing a second, “Intermediate Guide” to DevOps in the future. Stay tuned!