Logging Docker Containers with AWS CloudWatch

November 21, 2016

One way to log Docker containers is to use the logging drivers that Docker added last year. These drivers send the stdout and stderr output of a container to a destination that depends on the driver you choose, which lets you build a centralized log management system. By default, Docker uses the json-file driver, which saves container logs to a JSON file.

The awslogs driver allows you to log your containers to AWS CloudWatch, which is useful if you are already using other AWS services and would like to store and access the log data in the cloud. Once the logs are in CloudWatch, you can connect them to an external logging system for further monitoring and analysis.

This post describes how to set up the integration between Docker and AWS and then establish a pipeline of logs from CloudWatch into the ELK Stack (Elasticsearch, Logstash, and Kibana) offered by Logz.io.

Setting Up AWS

Before you even touch Docker, make sure that AWS is configured correctly. You need a user with an attached policy that allows writing events to CloudWatch, and you need a new log group and log stream in CloudWatch.

Creating an AWS User

First, create a new user in the IAM console (or select an existing one). Make note of the user’s security credentials (access key ID and secret access key), because they are needed to configure the Docker daemon in the next step. If this is a new user, simply add a new access key.

Second, define a new policy and attach it to the user that you have just created. In the same IAM console, select Policies from the menu on the left, and create the following policy, which enables writing logs to CloudWatch:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Action": [
            "logs:CreateLogStream",
            "logs:PutLogEvents"
          ],
          "Effect": "Allow",
          "Resource": "*"
        }
      ]
    }

Save the policy, and assign it to the user.
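The policy above allows writing to every log group in the account ("Resource": "*"). If you would rather limit it to a single log group, the following is a minimal sketch that builds the same two-action policy with a narrower resource. The region, account ID, and log group name are placeholder assumptions, and the ARN layout follows the usual CloudWatch Logs pattern, so check it against your own account before using it.

```python
import json


def awslogs_policy(region, account_id, log_group):
    """Build an IAM policy that lets the awslogs driver write to one log group."""
    # The trailing ":*" is meant to cover every log stream inside the group.
    resource = f"arn:aws:logs:{region}:{account_id}:log-group:{log_group}:*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
                "Effect": "Allow",
                "Resource": resource,
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder values (assumptions): replace them with your own.
    policy = awslogs_policy("us-east-1", "123456789012", "log-group")
    print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the policy editor in the IAM console in place of the wildcard version.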

Preparing CloudWatch

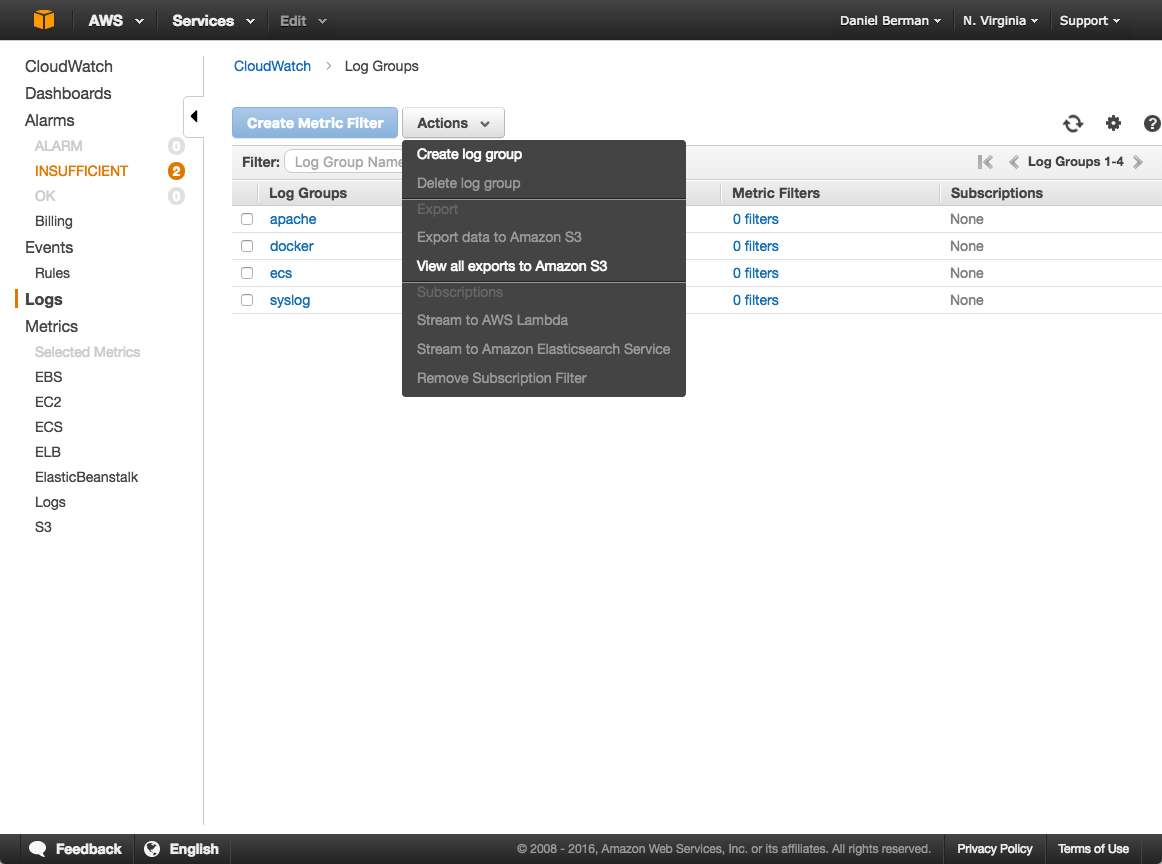

Open the CloudWatch console, select Logs from the menu on the left, and then open the Actions menu to create a new log group:

Within this new log group, create a new log stream. Make note of both the log group and log stream names — you will use them when running the container.

Configuring Docker

The next step is to configure the Docker daemon (and not the Docker client) to use your AWS user credentials.

As specified in the Docker documentation, there are a number of ways to do this, such as using shared credentials in ~/.aws/credentials or an EC2 instance profile (if Docker is running on an AWS EC2 instance). This example opts for a third option: setting environment variables in an Upstart job.

Create a new override file for the Docker service in the /etc/init folder:

$ sudo vim /etc/init/docker.override

Define your AWS user credentials as environment variables:

    env AWS_ACCESS_KEY_ID=
    env AWS_SECRET_ACCESS_KEY=

Save the file, and restart the Docker daemon:

$ sudo service docker restart

Note that in this case Docker is installed on an Ubuntu 14.04 machine, which uses Upstart. Ubuntu 15.04 and later use systemd, so on those versions you will need to pass the variables to the Docker service through systemd instead.
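For systemd-based hosts, one common approach is a drop-in file that sets the same two environment variables for the Docker service. The sketch below only renders the file contents; the drop-in file name aws-credentials.conf is a hypothetical choice, and the directory shown is the conventional location for Docker service overrides, which may differ on your distribution.

```python
from pathlib import Path

# Conventional drop-in directory for the Docker unit; the file name is hypothetical.
DROPIN_PATH = Path("/etc/systemd/system/docker.service.d/aws-credentials.conf")


def render_dropin(access_key_id, secret_access_key):
    """Return systemd drop-in text that passes AWS credentials to the Docker daemon."""
    return (
        "[Service]\n"
        f'Environment="AWS_ACCESS_KEY_ID={access_key_id}"\n'
        f'Environment="AWS_SECRET_ACCESS_KEY={secret_access_key}"\n'
    )


if __name__ == "__main__":
    # Print instead of writing, so the sketch runs without root privileges.
    print(f"# contents for {DROPIN_PATH}")
    print(render_dropin("<your-access-key-id>", "<your-secret-access-key>"), end="")
```

After saving the rendered text to the drop-in path, reload systemd and restart Docker with sudo systemctl daemon-reload followed by sudo systemctl restart docker.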

Using the awslogs Driver

Now that Docker has the correct permissions to write to CloudWatch, it’s time to test the first leg of the logging pipeline.

Use the docker run command with the awslogs driver options:

$ docker run -it --log-driver="awslogs" --log-opt awslogs-region="us-east-1" --log-opt awslogs-group="log-group" --log-opt awslogs-stream="log-stream" ubuntu:14.04 bash
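If you start containers from a script, it can help to assemble these options programmatically rather than by hand. The following is a small sketch, not part of Docker itself: the helper name awslogs_run_command is hypothetical, and it simply rebuilds the command shown above from its region, group, and stream values.

```python
import shlex


def awslogs_run_command(image, command, region, group, stream):
    """Assemble a `docker run` argument list that uses the awslogs driver."""
    args = ["docker", "run", "-it", "--log-driver=awslogs"]
    log_opts = {
        "awslogs-region": region,
        "awslogs-group": group,
        "awslogs-stream": stream,
    }
    # Each driver option is passed as its own --log-opt key=value pair.
    for key, value in log_opts.items():
        args += ["--log-opt", f"{key}={value}"]
    return args + [image, command]


if __name__ == "__main__":
    args = awslogs_run_command(
        "ubuntu:14.04", "bash", "us-east-1", "log-group", "log-stream"
    )
    # shlex.join quotes any value that contains shell-special characters.
    print(shlex.join(args))
```

The returned list can be handed to subprocess.run(args, check=True) on a machine where the Docker daemon has been configured as described earlier.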

If you’d rather use docker-compose, create a new docker-compose.yml file with this logging configuration:

    version: "2"
    services:
      web:
        image: ubuntu:14.04
        logging:
          driver: "awslogs"
          options:
            awslogs-region: "us-east-1"
            awslogs-group: "log-group"
            awslogs-stream: "log-stream"

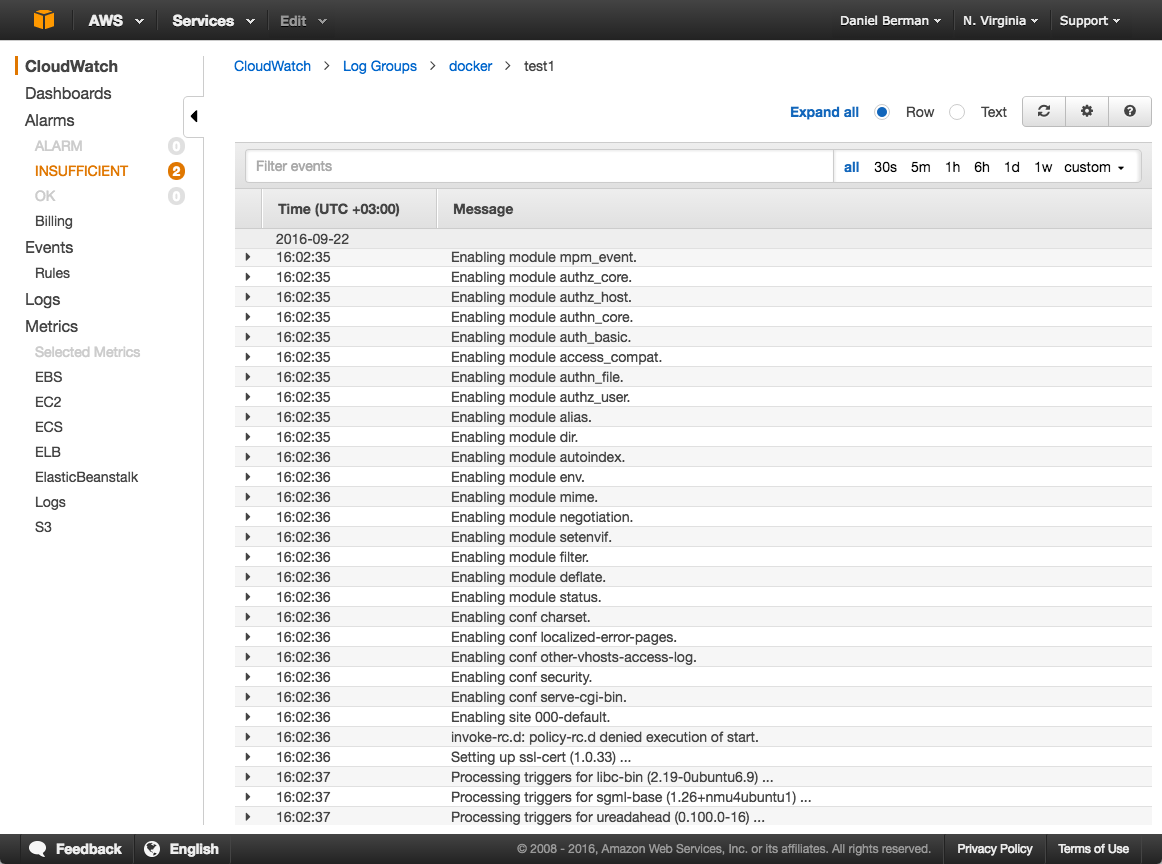

Open up the log stream in CloudWatch. You should see container logs:

Shipping to ELK for Analysis

So, you’ve got your container logs in CloudWatch. What now? If you need monitoring and analysis, the next obvious step would be to ship the data into a centralized logging system.


The next section will describe how to set up a pipeline of logs into the Logz.io AI-powered ELK Stack using S3 batch export. Two requirements by AWS need to be noted here:

- The Amazon S3 bucket must reside in the same region as the log data that you want to export

- You have to make sure that your AWS user has permissions to access the S3 bucket
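Besides the user permissions, AWS's export documentation also describes a bucket policy that lets the CloudWatch Logs service itself write to the bucket. As a hedged sketch, the snippet below generates such a policy; the bucket name dockertest and the region are placeholders, and you should compare the output with the current AWS documentation before applying it.

```python
import json


def export_bucket_policy(bucket, region):
    """Build an S3 bucket policy that allows CloudWatch Logs to export into the bucket."""
    # The service principal is region-specific and must match the log data's region.
    principal = {"Service": f"logs.{region}.amazonaws.com"}
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Lets CloudWatch Logs read the bucket ACL before exporting.
                "Action": "s3:GetBucketAcl",
                "Effect": "Allow",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Principal": principal,
            },
            {
                # Lets CloudWatch Logs write objects that the bucket owner fully controls.
                "Action": "s3:PutObject",
                "Effect": "Allow",
                "Resource": f"arn:aws:s3:::{bucket}/*",
                "Condition": {
                    "StringEquals": {"s3:x-amz-acl": "bucket-owner-full-control"}
                },
                "Principal": principal,
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder bucket and region (assumptions).
    print(json.dumps(export_bucket_policy("dockertest", "us-east-1"), indent=2))
```

Using the same region value for the bucket and the service principal reflects the first requirement in the list above.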

Exporting to S3

CloudWatch supports batch export to S3, which in this context means that you can export batches of archived Docker logs to an S3 bucket for further ingestion and analysis in other systems.

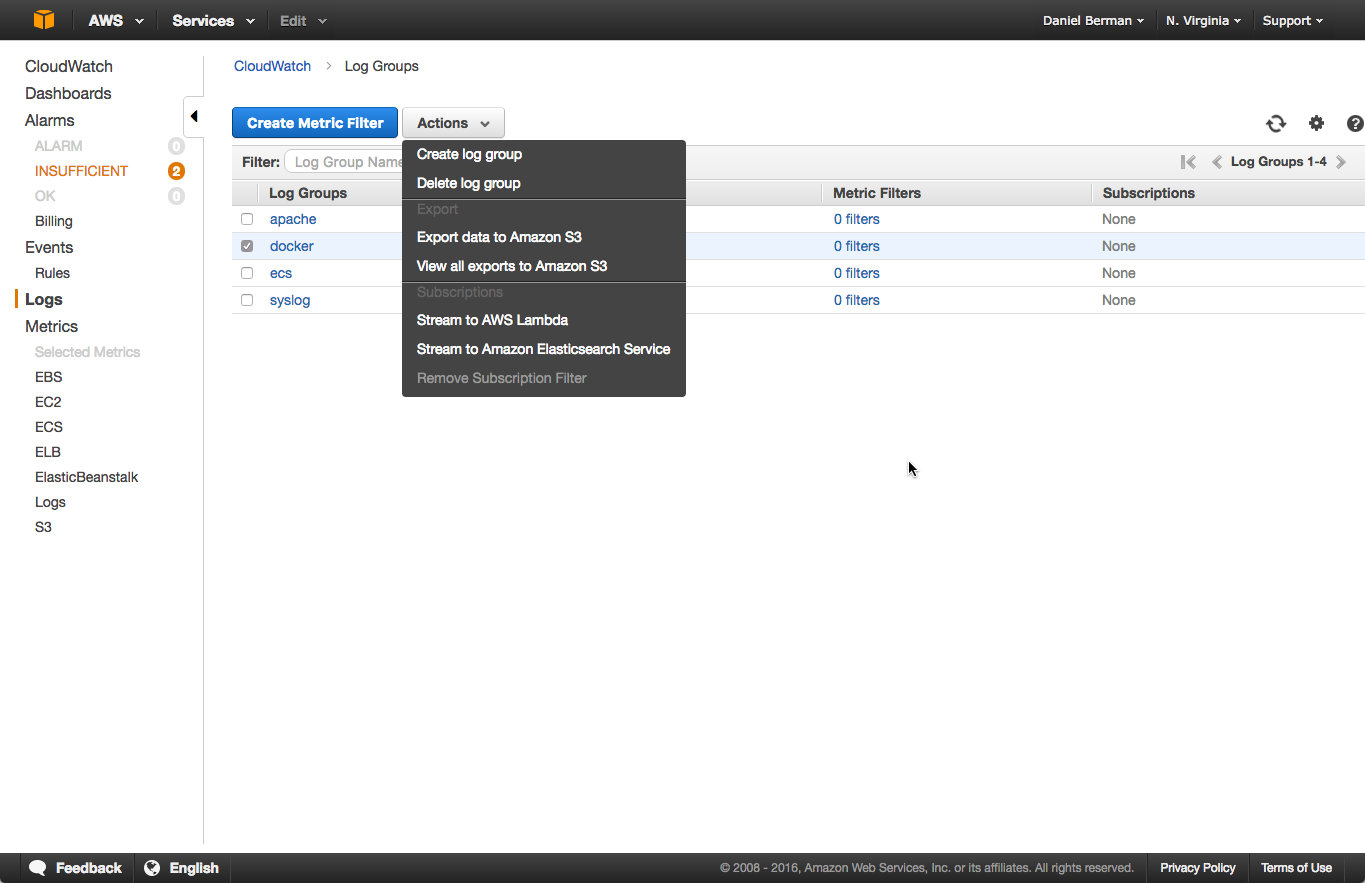

To export the Docker logs to S3, open the Logs page in CloudWatch. Then, select the log group you wish to export, click the Actions menu, and select Export data to Amazon S3:

In the dialog that is displayed, configure the export by selecting a time frame and a destination S3 bucket. Click Export data when you’re done, and the logs will be exported to S3.
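The same export can also be started from the AWS CLI with aws logs create-export-task, which expects the time frame as milliseconds since the Unix epoch. The sketch below converts two datetimes and assembles the argument list; the task name, bucket name, and dates are placeholder assumptions, and the command is only printed here, not executed.

```python
import shlex
from datetime import datetime, timezone


def to_epoch_millis(moment):
    """Convert a timezone-aware datetime to milliseconds since the Unix epoch."""
    return int(moment.timestamp() * 1000)


def export_task_args(log_group, bucket, start, end, task_name="docker-logs-export"):
    """Assemble an `aws logs create-export-task` argument list for a time window."""
    return [
        "aws", "logs", "create-export-task",
        "--task-name", task_name,
        "--log-group-name", log_group,
        "--from", str(to_epoch_millis(start)),
        "--to", str(to_epoch_millis(end)),
        "--destination", bucket,
    ]


if __name__ == "__main__":
    # Export one day of logs; the dates and names are placeholders.
    start = datetime(2016, 11, 21, tzinfo=timezone.utc)
    end = datetime(2016, 11, 22, tzinfo=timezone.utc)
    print(shlex.join(export_task_args("log-group", "dockertest", start, end)))
```

Passing timezone-aware datetimes avoids the window silently shifting with the local time zone of the machine that runs the script.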

Importing Into Logz.io

Setting up the integration with Logz.io is easy. In the user interface, go to Log Shipping → AWS → S3 Bucket.

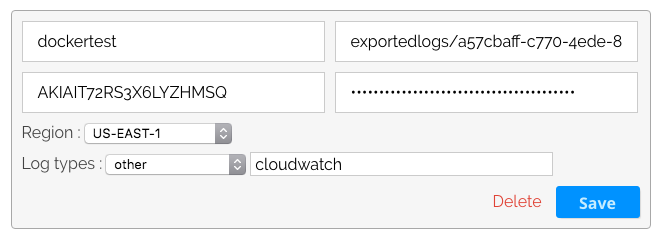

Click “Add S3 Bucket,” and configure the settings for the S3 bucket containing the Docker logs with the name of the bucket, the prefix path to the bucket (excluding the name of the bucket), the AWS access key details (ID + secret), the AWS region, and the type of logs:

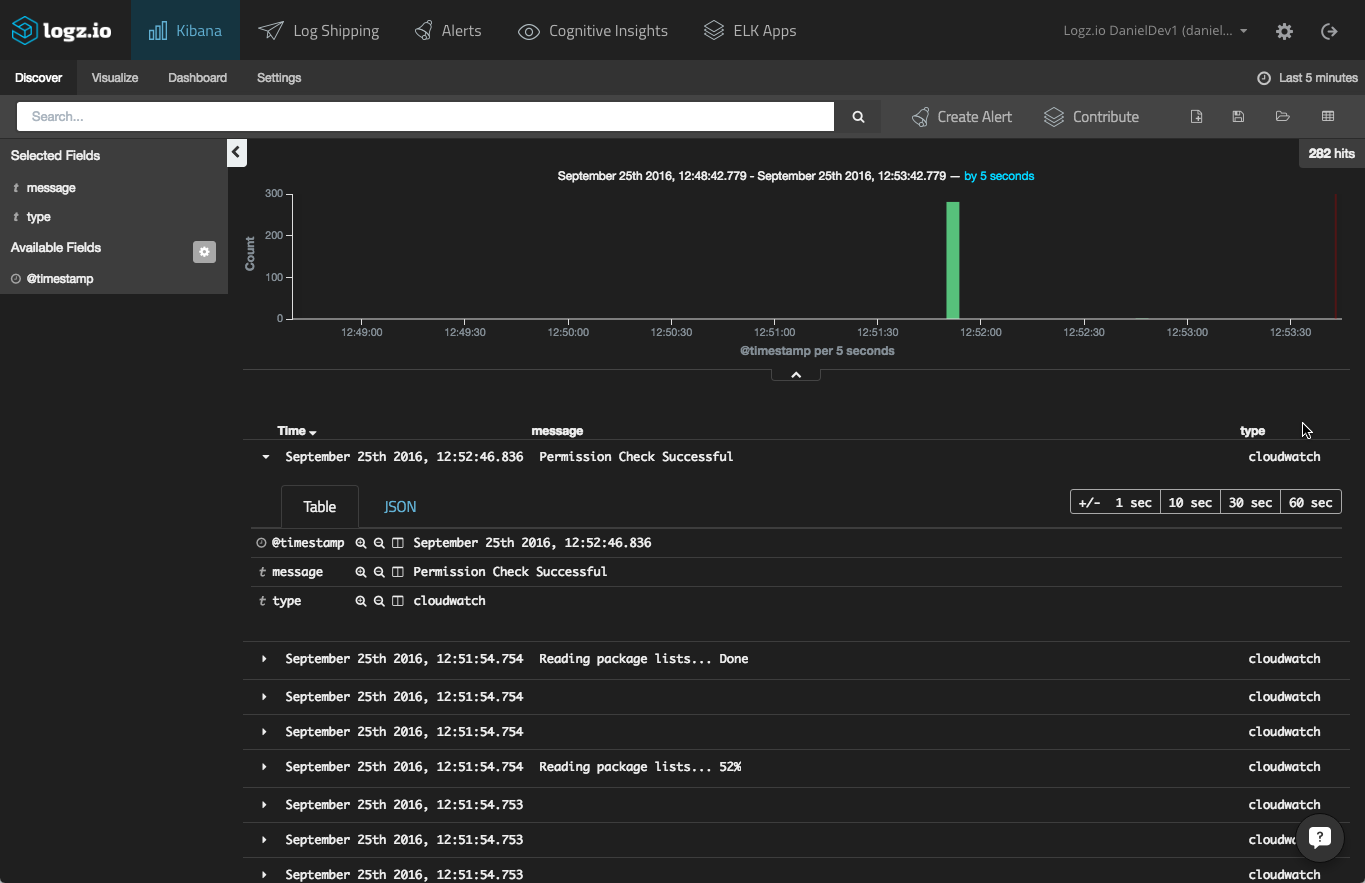

Hit the “Save” button, and your S3 bucket is configured. Head on over to the Visualize tab in the user interface. After a moment or two, the container logs should be displayed:

Using Logstash

If you are using your own ELK Stack, you can configure Logstash to import and parse the S3 logs using the S3 input plugin.

An example configuration would look something like this:

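    input {
      s3 {
        bucket => "dockertest"
        # AWS credentials with read access to the bucket
        # (older plugin versions used `credentials` and `region_endpoint`)
        access_key_id => "my-aws-key"
        secret_access_key => "my-aws-token"
        region => "us-east-1"
        # keep track of the last processed file
        sincedb_path => "./last-s3-file"
        codec => "json"
        type => "cloudwatch"
      }
    }

    filter {}

    output {
      # the elasticsearch output replaces the removed elasticsearch_http output
      elasticsearch {
        hosts => ["server-ip:9200"]
      }
    }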

A Final Note

There are other methods of pulling the data from CloudWatch into S3 — using Kinesis and Lambda, for example. If you’re looking for an automated process, this might be the better option to explore — I will cover it in the next post on the subject.

Also, if you’re looking for a more comprehensive solution for logging Docker environments using ELK, I recommend reading about the Logz.io Docker log collector.