What’s New in Logz.io – February 2018

February 22, 2018

2018 is well underway, and we’ve started the year by adding a bunch of new features and improvements. We’ve also open sourced some of the underlying components of our architecture, which help us ingest and process the huge volumes of data shipped to us and deploy all of these new goodies into production.

Let’s take a closer look.

Efficient log management

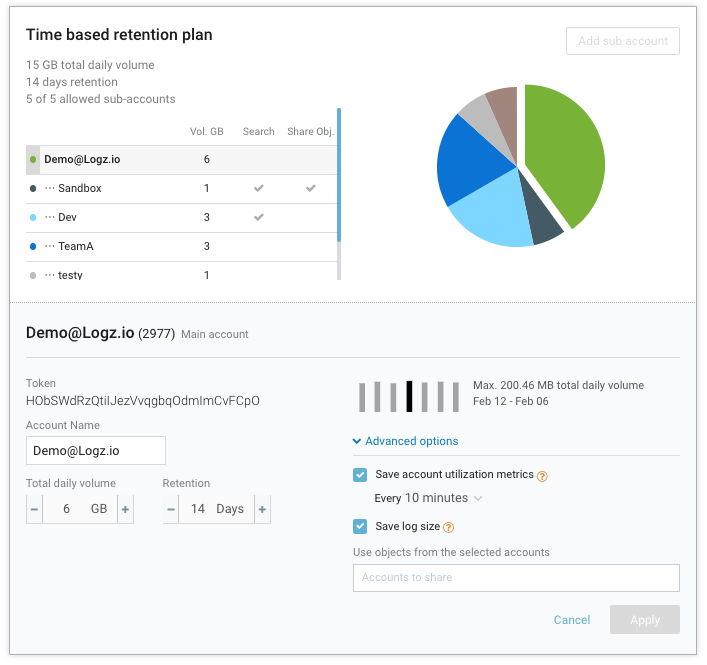

A revamped account management page (Settings → Manage Account) gives users more control over how much data is being shipped, and better visibility into it, through two new advanced account settings.

Each account now has the option to save account utilization metrics on a set schedule (every 10, 30 or 60 minutes). These metrics include the used data volume for the account as well as the expected data volume for the current indexing rate. Once recorded, you can use these metrics to manage your Logz.io environment more actively: create an alert that fires when a certain threshold is exceeded, or build a dashboard that monitors your data volumes.

Each account can now also save the log size for each log message. This adds a new field to your log messages and can be useful for periodically checking which logs may be too large.

New APIs

Being able to use Logz.io functionality via API is a big priority for us.

Last year we introduced the Search API, which allows our users to query their data using the Elasticsearch Search API DSL. Since then we have also added other APIs, including methods for handling alerts and CloudTrail data and working with Audit Trail (for archiving and compliance).
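As a rough illustration of what a query written in the Elasticsearch Search API DSL can look like, here is a minimal sketch that builds a request body for recent error responses. The field names `response` and `@timestamp`, the helper name `build_error_query`, and the default values are assumptions made for this example; the sketch only constructs the JSON body and does not call any Logz.io endpoint.

```python
import json


def build_error_query(min_status=500, window="now-15m", size=10):
    """Build an Elasticsearch Query DSL body for recent error responses.

    The field names ("response", "@timestamp") are assumptions; use the
    fields that actually exist in your own log mapping.
    """
    return {
        "size": size,
        "query": {
            "bool": {
                "filter": [
                    # Keep only responses at or above the given status code.
                    {"range": {"response": {"gte": min_status}}},
                    # Restrict results to the recent time window.
                    {"range": {"@timestamp": {"gte": window}}},
                ]
            }
        },
        # Newest matching log lines first.
        "sort": [{"@timestamp": {"order": "desc"}}],
    }


# Print the body as JSON, ready to be sent as a search request payload.
print(json.dumps(build_error_query(), indent=2))
```

The same body could be adapted to other searches by swapping the filters, for example matching on a specific log type instead of a status code range.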

We are now happy to inform our users of additional API capabilities:

- Export/Import API – for exporting and importing Kibana objects. Useful for backup, archiving, and sharing objects across different environments, this API allows users to export or import Kibana searches, visualizations and dashboards.

- Snapshots API – for sending snapshots of Kibana dashboards and visualizations to email addresses or endpoints.

- Endpoint API – for programmatically creating, updating, reading and deleting endpoints. Logz.io endpoints are used for sending alerts and snapshots. You can now use APIs to create and manage built-in endpoints or custom endpoints.

A list of the supported Logz.io APIs is available in the Logz.io API documentation.

Multiple alert severity

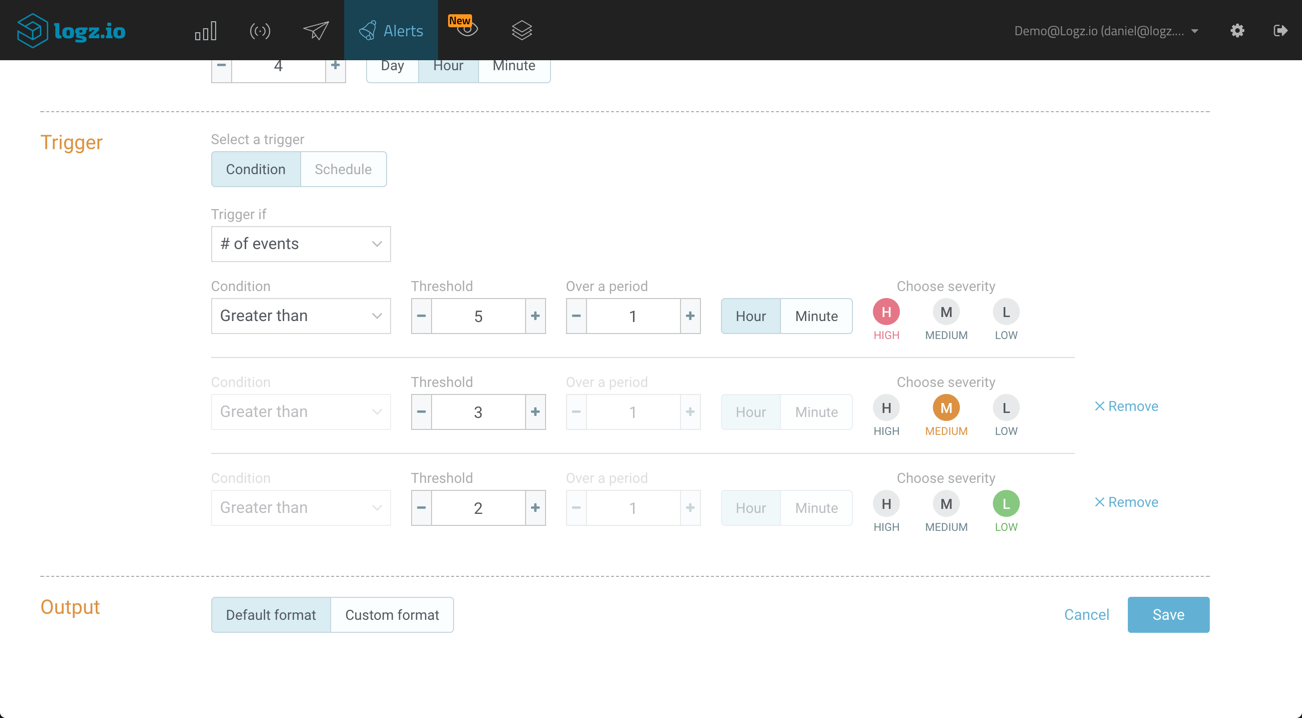

Logz.io offers an advanced built-in alerting mechanism that allows users to create log-based alerts that notify them, in real-time, when specific conditions are met.

We are continuously improving the underlying alerting engine and the wizard for configuring alerts, and have now added a new option for defining multiple severity levels for different thresholds within the same alert.

Say, for example, you are analyzing Apache error responses and would like to receive a different alert for different thresholds: a high-severity alert if more than five errors are logged, a medium-severity alert if more than three errors are logged, and a low-severity alert if more than two are logged.
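The logic of this example can be sketched in a few lines of Python. This is only an illustration of how several thresholds within one alert map to severities, not how the Logz.io alerting engine is implemented; the names `SEVERITY_THRESHOLDS` and `severity_for` are hypothetical.

```python
# Thresholds from the Apache example, ordered from most to least severe.
# Each entry means "more than `limit` errors triggers `severity`".
SEVERITY_THRESHOLDS = [("high", 5), ("medium", 3), ("low", 2)]


def severity_for(error_count, thresholds=SEVERITY_THRESHOLDS):
    """Return the highest severity whose threshold is exceeded, or None."""
    for severity, limit in thresholds:
        if error_count > limit:
            return severity
    # No threshold exceeded, so no alert is triggered.
    return None


for count in (2, 3, 4, 6):
    print(count, severity_for(count))
```

Because the thresholds are checked from most to least severe, a count that exceeds several thresholds triggers only the highest matching severity.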

Open sourcing Sawmill and Apollo

Providing ELK as a cloud service, Logz.io is built on top of open source technology. As such, we understand the importance of giving back to the community, and this month we announced the open sourcing of two projects that are used in our architecture — Sawmill and Apollo.

- Sawmill is a Java library that enables data processing, enrichment, filtering and transformation. After some hard-earned lessons from using Logstash, Logz.io developed and implemented Sawmill in our data ingestion pipelines to ensure reliable and stable data ingestion.

- Apollo is a Continuous Deployment tool for deploying containers using Kubernetes, and was developed to help Logz.io continuously deploy components of our ELK-based architecture into production.

We have developed and open sourced other tools and frameworks as well, and you can see a full list of these projects on GitHub.

As always, we’d love to get your feedback. So if you have any questions or ideas, feel free to let us know at info@logz.io.

We’ve got some additional goodies on the way, so stay tuned for news and updates!