Dsco’s Journey Towards Enhanced Visibility

"Logz.io is a mission-critical service for us - providing us with a comprehensive monitoring package that our troubleshooting depends on."

Customer: Dsco
Industry: Internet
Headquarters: Lehi, UT
Products: Log Management

Headquartered in Lehi, Utah, Dsco is a leading inventory visibility software company that simplifies and standardizes the way trading partners connect and exchange data on product inventory, purchase orders, invoicing, and shipping. Thousands of major retailers and brands use Dsco’s state-of-the-art supply chain technology to increase inventory visibility, build stronger partnerships, and gain superior operational intelligence and efficiency.

Looking to scale effectively

Dsco’s application is written primarily in Java and is served by Apache web servers running on AWS. To monitor and troubleshoot the application, Dsco initially used Graylog to collect and analyze the Apache log data, and a more manual approach for the application logs. Dsco’s logging strategy is verbose by design and includes debug and info messages. The resulting datasets were extremely large, which pushed Dsco to explore a more automated and centralized approach.

Dsco’s engineering team also tested Graylog for the Java application logs, but concluded that the cost of deploying the required infrastructure on AWS did not justify it.

After the initial pilot, we quickly understood we needed a solution that was both cost-effective and that could automatically scale together with the growth in log data we were expecting.

Brett Elsmore, DevOps Manager at Dsco

Dsco’s engineers also understood that, to stay ahead of events and effectively analyze the log data they generated, traditional log analysis methods were not an option: Dsco needed a solution that could help the team proactively identify issues and even predict events.

Making the move to Logz.io

After exploring alternatives, Dsco selected Logz.io as the team’s primary logging solution. Because Logz.io scales automatically, the expected growth in log data was no longer a concern. “More importantly,” says Brett,

Logz.io’s built-in alerting mechanism and machine learning capabilities suited our approach to modern log analysis, and held the promise of helping us identify and mitigate critical events faster and more efficiently.

Getting on board with Logz.io was straightforward.

Dsco’s staging and production environments consist of over 200 AWS instances, each running Filebeat as a log forwarder. Dsco’s Java services use log4j as their logging framework to write log files, which Filebeat tracks and ships to Logz.io’s listeners.

Eliminating unnecessary logging noise

To ensure that the company’s logging pipeline ships only relevant and required data, Dsco used Volume Analysis, a machine-learning technology developed by Logz.io that identifies recurring log patterns so that noisy and unnecessary logs can be cut out.
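Logz.io does not describe how Volume Analysis works internally, so the following is only a generic sketch of the underlying idea, not Logz.io’s algorithm: mask the variable parts of each log line (numbers, hex values) so that lines differing only in IDs collapse into one template, then count lines per template to see which patterns dominate the volume. The masking rules and the names `to_pattern` and `top_patterns` are hypothetical.

```python
import re
from collections import Counter

# Hypothetical masking rules: replace variable tokens with placeholders so that
# lines differing only in IDs, numbers or hex values collapse into one template.
# Hex is masked first so "0x1f" is not partially rewritten by the number rule.
_MASKS = [
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\b\d+(\.\d+)?\b"), "<NUM>"),
]


def to_pattern(line: str) -> str:
    """Reduce a log line to a template by masking its variable tokens."""
    for regex, placeholder in _MASKS:
        line = regex.sub(placeholder, line)
    return line


def top_patterns(lines, n=3):
    """Count lines per template and return the n most frequent templates."""
    counts = Counter(to_pattern(line) for line in lines)
    return counts.most_common(n)


if __name__ == "__main__":
    sample = [
        "DEBUG cache hit for key 1001",
        "DEBUG cache hit for key 1002",
        "DEBUG cache hit for key 1003",
        "INFO order 42 processed in 120 ms",
        "ERROR failed to serialize object 7",
    ]
    # The three cache-hit lines share one template and top the list.
    print(top_patterns(sample, 1))
```

A template that accounts for a large share of the volume but carries little diagnostic value is a candidate for filtering.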

Based on a report generated by Volume Analysis, Dsco determined internally which logs could be discarded. Logz.io’s customer success and support teams then helped implement regex filtering in Filebeat. The end result was a more focused monitoring environment and lower operational costs for logging.
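Filebeat inputs accept an `exclude_lines` setting: a list of regular expressions, where any line matching one of them is dropped before it is shipped. The case study does not say which Filebeat option or which patterns Dsco used, so the following Python sketch only mimics that matching behavior on sample lines. The patterns in `EXCLUDE_PATTERNS` are hypothetical, and Filebeat compiles patterns with Go’s regex syntax, which differs from Python’s `re` in some details.

```python
import re
from typing import Iterable, Iterator, List

# Hypothetical patterns for noise identified in a volume report; illustrative
# only, not Dsco's actual filters.
EXCLUDE_PATTERNS: List[str] = [
    r"^DEBUG .*cache hit",
    r"health[- ]?check",
]


def exclude_lines(lines: Iterable[str], patterns: List[str]) -> Iterator[str]:
    """Yield only the lines that match none of the exclusion patterns.

    A line is dropped if any pattern matches anywhere in it (search, not a
    full-line match), similar to how exclude_lines is applied per line.
    """
    compiled = [re.compile(p) for p in patterns]
    for line in lines:
        if not any(rx.search(line) for rx in compiled):
            yield line


if __name__ == "__main__":
    sample = [
        "DEBUG cache hit for key 1001",
        "GET /health-check 200",
        "ERROR failed to serialize object 7",
    ]
    # Only the error line survives the filter.
    print(list(exclude_lines(sample, EXCLUDE_PATTERNS)))
```

Filtering at the shipper means discarded lines never leave the instance, which is where the cost savings come from.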

Deploying a multi-layered monitoring system

Dsco’s engineers use a variety of methods to closely monitor the environment for critical events. Together, these methods provide the team with a solid safety net that ensures the company’s SLAs are met.

For starters, saved queries in Kibana are used to look for specific exceptions, for example multiple uploads of the same invoice, or failed serialization of a data object a user uploaded to the system. The team also runs these queries to monitor new code deployments for related issues.

Second, a series of alerts notifies the team when something out of the ordinary is taking place. Dsco supports a variety of APIs for creating, updating, and retrieving objects and for integrating with the system. Close to 1 million API calls are made each day, and they are closely monitored. Should an API call take longer than 5 seconds, an alert is triggered and sent to PagerDuty, where the on-call engineer handles it.
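The alert rule itself is configured in Logz.io, and the case study does not show it. As a minimal sketch of the condition only, assuming each API call has already been parsed from the logs into a hypothetical `ApiCall` record with an endpoint and a duration, the check reduces to a threshold filter over recent calls. The 5-second threshold comes from the text; the record fields and function name are assumptions, and the hand-off to PagerDuty is not shown.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Threshold from the text: calls slower than 5 seconds should trigger an alert.
LATENCY_THRESHOLD_SECONDS = 5.0


@dataclass
class ApiCall:
    # Hypothetical fields parsed from an access-log entry.
    endpoint: str
    duration_seconds: float


def slow_calls(
    calls: Iterable[ApiCall], threshold: float = LATENCY_THRESHOLD_SECONDS
) -> List[ApiCall]:
    """Return the calls whose duration strictly exceeds the threshold."""
    return [c for c in calls if c.duration_seconds > threshold]


if __name__ == "__main__":
    recent = [
        ApiCall("/orders", 0.8),
        ApiCall("/invoices", 6.2),
        ApiCall("/inventory", 5.0),  # exactly at the threshold: not slow
    ]
    # A non-empty result is what would page the on-call engineer.
    print(slow_calls(recent))
```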

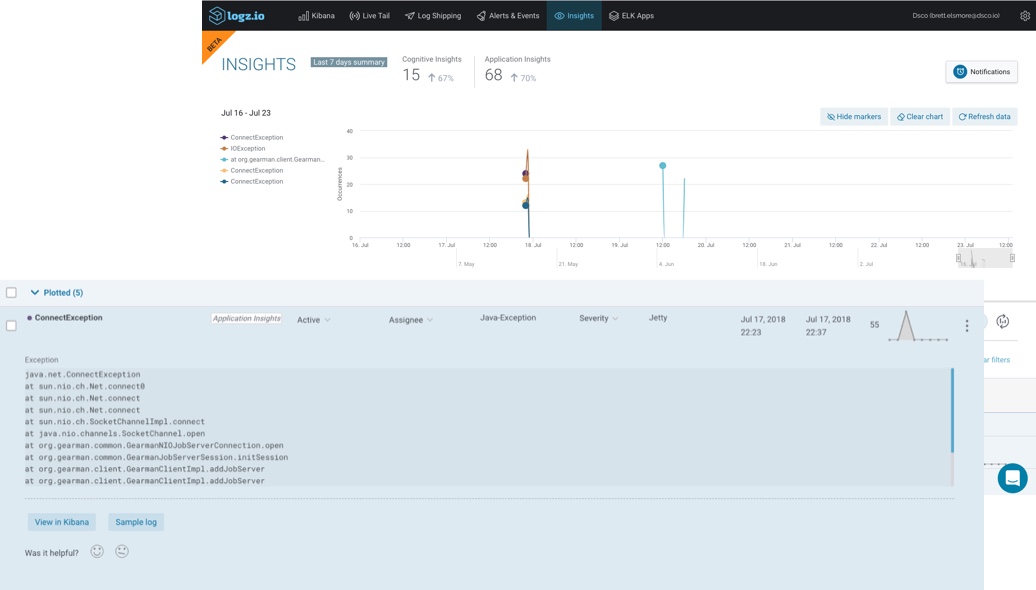

Application Insights, Logz.io’s machine learning technology for identifying new exceptions, is used to review and, if necessary, handle events that might otherwise have gone unnoticed. In one such case, Application Insights flagged an RDS issue: an attempt had been made to write to a locked table in the database. Left unhandled, this would have resulted in faulty order processing and, potentially, lost revenue.

Image: A Java exception caught by Application Insights in Logz.io.

On the path to full visibility

Today, both the DevOps and development teams at Dsco use Logz.io to analyze over 100 GB of log data a day.

Before Logz.io, Dsco faced a dual challenge: logging a large and growing dataset, and extracting actionable insights from it. The solution Dsco was looking for had to scale, and it had to help the team move from a manual, traditional logging approach to an automated, proactive one.

Logz.io was selected for exactly this reason, and it played an integral part in Dsco’s journey toward a modern, centralized, and predictive logging system. This system has given the team enhanced visibility into the application’s performance and has ultimately helped Dsco cut troubleshooting time.

The visibility Logz.io has given us has helped us improve our mean time to repair. Logz.io is a mission-critical service for us, helping us understand the context, and providing us with a comprehensive monitoring package that our troubleshooting now depends on.

Brett Elsmore, DevOps Manager at Dsco