Best Practices for Managing Elasticsearch Indices

September 10, 2019

Elasticsearch is a powerful distributed search engine that has, over the years, grown into a more general-purpose NoSQL storage and analytics tool. While Elasticsearch can require some significant oversight to scale efficiently, there are capabilities that simplify ELK operations – one area that deserves special focus is Elasticsearch indexing and managing indices.

The way data is organized across nodes in an Elasticsearch cluster has a huge impact on performance and reliability. For users, this element of operating Elasticsearch is also one of the most challenging elements.

A non-optimized or erroneous configuration can make all the difference. While traditional best practices for managing Elasticsearch indices still apply, Elasticsearch has added several features that further optimize and automate index management. This article will explore several ways to make the most of your indices by combining traditional advice with an examination of the recently released features.

The concepts explained in this article also apply to OpenSearch – the forked version of Elasticsearch launched and maintained by AWS after Elastic close sourced the ELK Stack – which offer the same capabilities.

If you’re interested in logging with the ELK Stack/OpenSearch, but would rather not have to think about managing Elasticsearch indices altogether, check out Logz.io, which delivers a fully managed OpenSearch cluster and OpenSearch Dashboards instance. This way, you can focus on things other than Elasticsearch management.

All that said, this is an article about Elasticsearch, so lets get started. More on the subject:

Understanding indices

Data in Elasticsearch is stored in one or more indices. Because those of us who work with Elasticsearch typically deal with large volumes of data, data in an index is partitioned across shards to make storage more manageable. An index may be too large to fit on a single disk, but shards are smaller and can be allocated across different nodes as needed.

Another benefit of proper sharding is that searches can be run across different shards in parallel, speeding up query processing. The number of shards in an index is decided upon index creation and cannot be changed later.

Sharding an index is useful, but, even after doing so, there is still only a single copy of each document in the index, which means there is no protection against data loss.

To deal with this, we can set up replication. Each shard may have a number of replicas, which are configured upon index creation and may be changed later. The primary shard is the main shard that handles the indexing of documents and can also handle processing of queries.

The replica shards process queries but do not index documents directly. They are always allocated to a different node from the primary shard, and, in the event of the primary shard failing, a replica shard can be promoted to take its place.

While more replicas provide higher levels of availability in case of failures, it is also important not to have too many replicas. Each shard has a state that needs to be kept in memory for fast access. The more shards you use, the more overhead can build up and affect resource usage and performance.

Optimizations for time series data

Using Elasticsearch for storage and analytics of time series data, such as application logs or Internet of Things (IoT) events, requires the management of huge amounts of data over long periods of time.

Elasticsearch Rollover

Time series data is typically spread across many indices. A simple way to do this is to have a different index for arbitrary periods of time, e.g., one index per day. Another approach is to use the Rollover API, which can automatically create a new index when the main one is too old, too big, or has too many documents.

Elasticsearch Shrink

As indices age and their data becomes less relevant, there are several things you can do to make them use fewer resources so that the more active indices have more resources available. One of these is to use the Shrink API to flatten the index to a single primary shard.

Having multiple shards is usually a good thing but can also serve as overhead for older indices that receive only occasional requests. This, of course, greatly depends on the structure of your data.

Frozen indices

For very old indices that are rarely accessed, it makes sense to completely free up the memory that they use. Elasticsearch 6.6 onwards provides the Freeze API which allows you to do exactly that. When an index is frozen, it becomes read-only, and its resources are no longer kept active.

The tradeoff is that frozen indices are slower to search, because those resources must now be allocated on demand and destroyed again thereafter.

To prevent accidental query slowdowns that may occur as a result, the query parameter ignore_throttled=false must be used to explicitly indicate that frozen indices should be included when processing a search query.

Index lifecycle management

The above two sections have explained how the long-term management of indices can go through a number of phases between the time when they are actively accepting new data to be indexed to the point at which they are no longer needed.

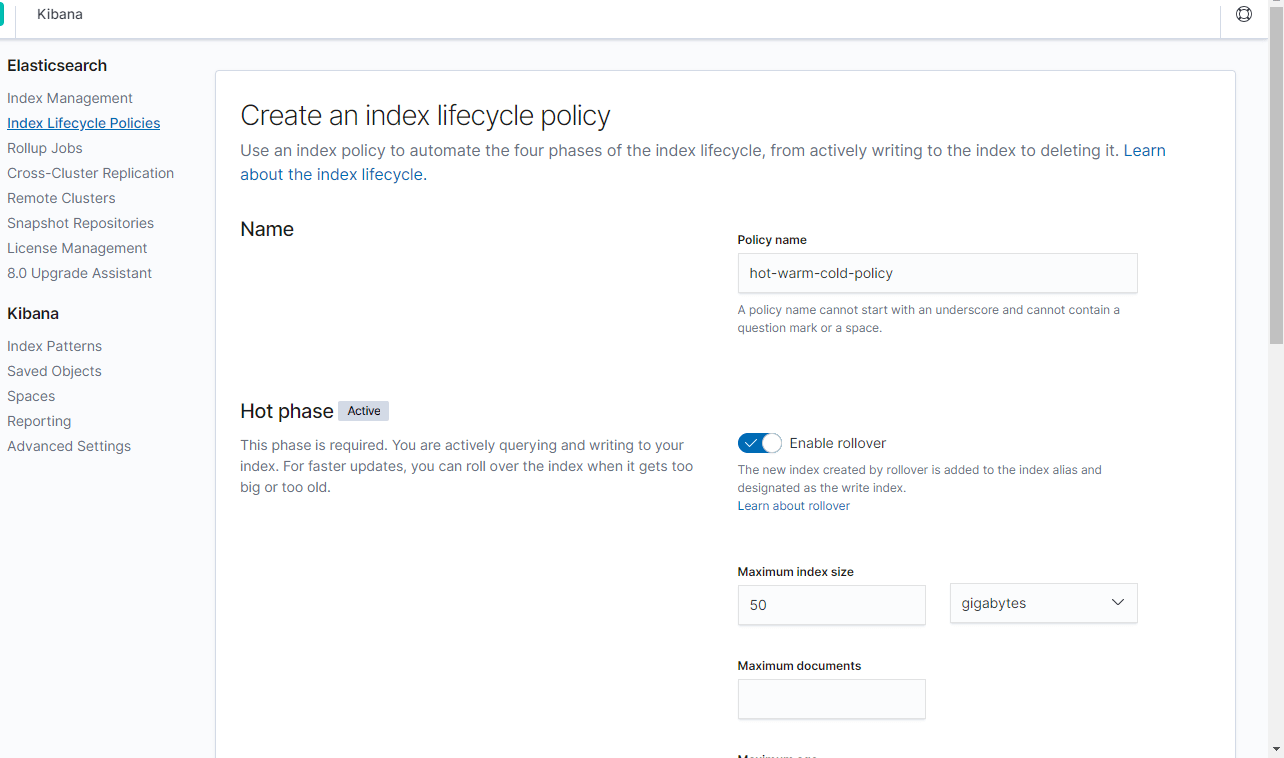

The Index Lifecycle Management (ILM) feature released in Elasticsearch 6.7 puts all of this together and allows you to automate these transitions that, in earlier versions of the E Stack, would have to be done manually or by using external processes.

ILM, which is available under Elastic’s Basic license and not the Apache 2.0 license, allows users to specify policies that define when these transitions take place as well as the actions that apply during each phase.

We can use ILM to set up a hot-warm-cold architecture, in which the phases as well as the actions are optional and can be configured if and as needed:

- Hot indices are actively receiving data to index and are frequently serving queries. Typical actions for this phase include:

- Setting high priority for recovery.

- Specifying rollover policy to create a new index when the current one becomes too large, too old, or has too many documents.

- Warm indices are no longer having data indexed in them, but they still process queries. Typical actions for this phase include:

- Setting medium priority for recovery.

- Optimizing the indices by shrinking them, force-merging them, or setting them to read-only.

- Allocating the indices to less performant hardware.

- Cold indices are rarely queried at all.

Typical actions for this phase include:

-

- Setting low priority for recovery.

- Freezing the indices.

- Allocating the indices to even less performant hardware.

- Delete indices that are older than an arbitrary retention period.

ILM policies may be set using the Elasticsearch REST API, or even directly in Kibana, as shown in the following screenshot:

Organizing data in Elasticsearch indices

When managing an Elasticsearch index, most of your attention goes towards ensuring stability and performance. However, the structure of the data that actually goes into these indices is also a very important factor in the usefulness of the overall system.

This structure impacts the accuracy and flexibility of search queries over data that may potentially come from multiple data sources and as a result also impacts how you analyze and visualize your data.

In fact, the recommendation to create mappings for indices has been around for a long time. While Elasticsearch is capable of guessing data types based on the input data it receives, its intuition is based on a small sample of the data set and may not be spot-on. Explicitly creating a mapping can prevent issues with data type conflicts in an index.

Even with mappings, gaining insight from volumes of data stored in an Elasticsearch cluster can still be an arduous task.

Data incoming from different sources which may have a similar structure (e.g., an IP address coming from IIS, NGINX, and application logs) may be indexed to fields with completely different names or data types.

The Elastic Common Schema, released with Elasticsearch 7.x, is a new development in this area. By setting a standard to consolidate field names and data types, it suddenly becomes much easier to search and visualize data coming from various data sources. This enables users to leverage Kibana to get a single unified view of various disparate systems they maintain.

Summary

Properly setting up index sharding and replication directly affects the stability and performance of your Elasticsearch cluster.

The aforementioned features are all useful tools that will help you manage your Elasticsearch indices. Still, this task remains one of the most challenging elements for operating Elasticsearch, requiring an understanding of both Elasticsearch’s data model and the specific data set being indexed.

As mentioned earlier, if you’d rather focus on other tasks, Logz.io delivers a fully managed OpenSearch experience. This enables you to use the most popular open source log management stack, without having to manage indices or any other data pipeline component yourself.

For time-series data, the Rollover and Shrink APIs allow you to deal with basic index overflow and optimize indices. The recently added ability to freeze indices allows you to deal with another category of aging indices.

The ILM feature, also a recent addition, allows full automation of index lifecycle transitions. As indices age, they can be modified and reallocated so that they take up fewer resources, leaving more resources available for the more active indices.

Finally, creating mappings for indexed data and mapping fields to the Elastic Common Schema can help get the most value out of the data in an Elasticsearch cluster.