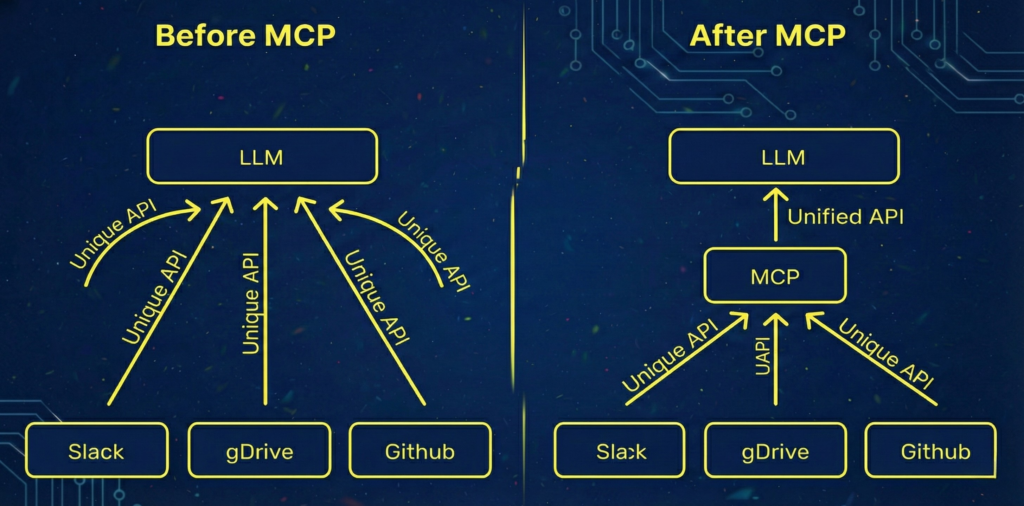

MCP (Model Context Protocol) is a standardized protocol for connecting LLMs to external tools, data sources, and application runtimes. It defines how context is structured, requested, and exchanged so models can interact with systems in a consistent, interoperable way.

Originally introduced by Anthropic, MCP aims to solve a growing problem in LLM application design: every model and tool integration previously required custom glue code, bespoke schemas, and one-off orchestration logic. MCP provides a shared contract that decouples models from the underlying tools and infrastructure.

Why MCP Matters

As LLM-powered systems evolve from chat interfaces into full AI agents and systems, models need structured access to external capabilities such as databases, file systems, APIs, and developer tools. Without a protocol, each integration becomes brittle and vendor-specific.

MCP standardizes:

- How tools describe themselves

- How models request tool execution

- How structured data is exchanged

- How context is incrementally extended

This reduces integration complexity, improves portability across models, and enables a more modular architecture for AI systems.

Core Architecture

MCP defines a client-server interaction model between three main components:

- Model runtime – The LLM that interprets prompts and decides when to invoke tools.

- MCP client – A mediator that translates model intent into structured protocol messages.

- MCP server – A tool provider that exposes capabilities via a defined interface.

The protocol does not require a specific model vendor. Any model that can produce structured tool calls can be connected to any MCP-compliant server. In fact, Anthropic contributed MCP to the Agentic AI Foundation (under the Linux Foundation).

This separation introduces a clean boundary between reasoning (model) and execution (tools).

Key MCP Concepts

Tool Declaration

Tools in MCP are declared with structured metadata. This includes:

- Name and description

- Input schema

- Output schema

- Capability constraints

This makes tool invocation explicit and machine-readable, reducing ambiguity in how arguments are passed.

Context Extension

MCP treats context as an evolving resource. Instead of sending the entire conversation state each time, the protocol allows incremental updates. This improves performance and reduces token overhead in long-running sessions.

Structured Invocation

Tool calls are expressed in structured form rather than free-text instructions. This enables:

- Validation against schemas

- Deterministic argument parsing

- Safer execution boundaries

The model proposes an invocation, and the MCP layer validates and executes it.

Transport Agnosticism

MCP does not mandate a specific transport layer. It can operate over HTTP, WebSockets, local IPC, or other communication channels. This flexibility allows it to run locally in developer tooling or remotely in cloud systems.

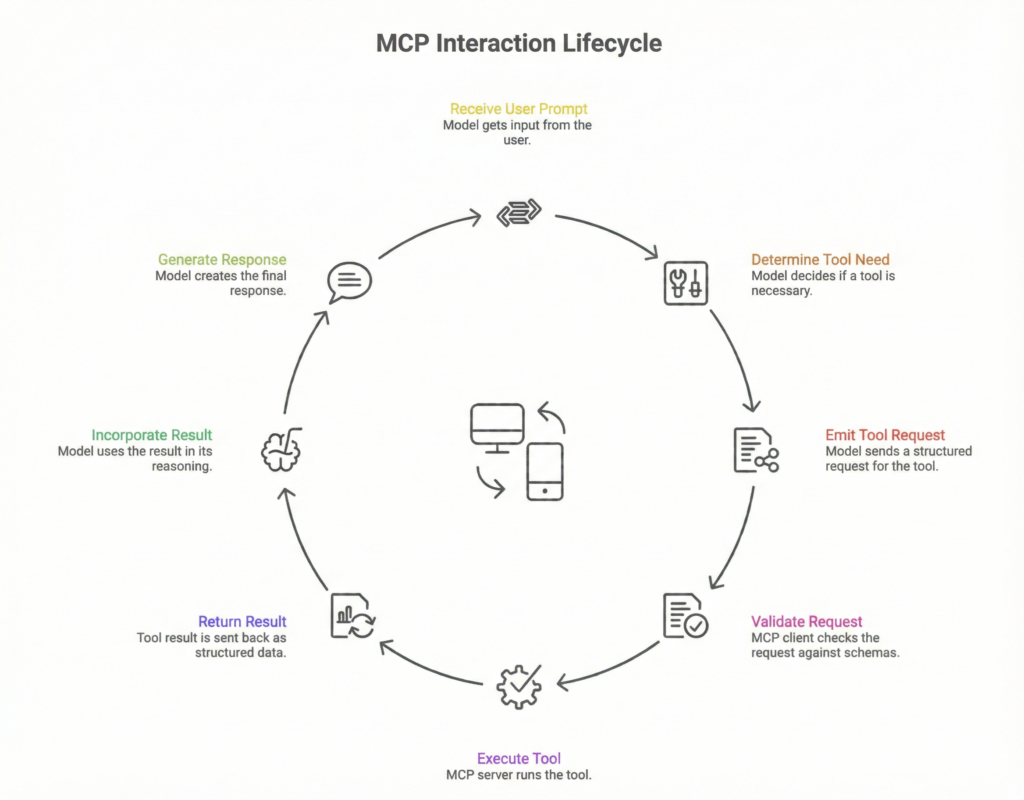

Execution Lifecycle

A typical MCP interaction follows this lifecycle:

- Model receives a user prompt.

- Model determines a tool is required.

- Model emits a structured tool invocation request.

- MCP client validates the request against declared schemas.

- MCP server executes the tool.

- The result is returned as structured data.

- The model incorporates the result into its reasoning and generates a final response.

This lifecycle formalizes what was previously ad hoc “function calling” logic.

Security Model

MCP introduces clearer trust boundaries between reasoning and execution layers by making tool interactions explicit and schema-validated rather than free-form. This structural clarity is itself a security property: when a model can only invoke declared tools with validated inputs, the attack surface is meaningfully smaller than in systems where models emit arbitrary code or shell commands.

That said, MCP defines where enforcement happens, not whether it happens,and that distinction matters for implementation teams.

Responsibility is layered across three zones:

The MCP client is the first enforcement point. It is responsible for validating that model-proposed invocations conform to declared schemas before anything reaches a tool server. If a model hallucinates an argument type or passes unexpected input, the client should reject it at this boundary. This is the most critical control layer because it sits between reasoning and execution.

The MCP server (tool provider) bears responsibility for its own execution scope. Even if a request passes client-side validation, servers should apply least-privilege principles internally, a file system tool shouldn’t expose paths beyond its declared scope, and a database tool shouldn’t allow queries outside its permitted schema. Servers should treat incoming requests as potentially adversarial, even from trusted clients.

The orchestration layer (if present) is responsible for higher-order controls: rate limiting tool calls, logging all invocations for auditability, and detecting anomalous usage patterns that might indicate prompt injection or runaway agent behavior.

Prompt injection is a specific, underappreciated risk. Because MCP tool results are fed back into model context, a malicious or compromised tool response could attempt to redirect model behavior in subsequent steps. This is not a flaw in the protocol itself, but it means that tool outputs should be treated as untrusted content. sanitized before re-injection into the reasoning loop where possible, and audited in production systems.

The protocol’s transport agnosticism also has security implications. An MCP deployment over local IPC carries very different threat assumptions than one over public HTTP. Teams must apply appropriate transport-layer security (TLS, authentication, network segmentation) based on deployment context — MCP does not prescribe this, so it must be designed deliberately.

What the protocol does provide is the structural precondition for all of this: explicit tool declarations, schema contracts, and a defined invocation lifecycle. Without that structure, enforcement is ad hoc. MCP makes it systematic.

Comparison To Traditional Function Calling

Before MCP, most LLM integrations relied on vendor-specific function calling APIs. These tightly coupled the model and tool layer.

MCP differs by:

- Decoupling tool providers from model vendors

- Supporting dynamic tool discovery

- Standardizing invocation semantics

- Enabling multi-tool orchestration via a shared interface

This shifts AI architecture from monolithic integrations to composable systems.

Design Trade-Offs

MCP introduces additional abstraction layers, which can increase complexity in simple applications. For small prototypes, direct function calling may be faster to implement.

However, for production systems with:

- Multiple tools

- Cross-model portability requirements

- Long-lived sessions

- Strict security controls

MCP provides clearer structure and long-term maintainability.

Implementation Considerations

When adopting MCP, engineering teams should:

- Define clear tool schemas – Avoid vague or overly permissive input definitions. Explicit contracts reduce unexpected behavior.

- Separate reasoning from execution – Treat tool servers as isolated systems. Avoid embedding business logic inside prompt templates.

- Monitor invocation patterns – Track which tools are used, how often, and under what prompts to refine system design.

- Plan for versioning – Tool schemas evolve. Version your MCP interfaces to avoid breaking existing integrations.

- Optimize context size – Use incremental context updates to minimize token usage in large workflows.

Limitations

MCP does not eliminate hallucinations. The model still decides when and how to call tools. Poor prompting or ambiguous tool descriptions can lead to incorrect invocations.

The protocol also does not standardize orchestration strategies. Complex workflows still require higher-level planning logic.

Finally, ecosystem maturity is evolving. Tooling, debugging frameworks, and observability standards are still developing.

Where MCP Fits in the Stack

MCP operates between the model layer and the infrastructure layer. It is not a model architecture and not an orchestration engine. It is a communication contract.

In a modern AI stack:

- The model handles reasoning.

- MCP handles structured tool interaction.

- External services handle execution.

- Orchestration layers coordinate multi-step workflows.

This separation enables cleaner system boundaries and better scaling patterns.

FAQs

No. MCP is designed to be model-agnostic. Any LLM capable of structured tool invocation can integrate with an MCP client, making it portable across vendors and runtimes.

It formalizes and generalizes them. Instead of relying on vendor-specific implementations, MCP provides a shared protocol that multiple models and tools can adopt.

No. Simple applications with limited tool usage may not benefit from the added abstraction. MCP becomes valuable when systems require modularity, portability, or complex multi-tool workflows.

Indirectly. It does not change model weights or reasoning ability, but it improves reliability in tool execution by enforcing structured validation and clearer boundaries.